In 2015, a new artificial intelligence laboratory was announced with an unusual premise.

It would not operate as a conventional company. It would not be driven by the need to generate a financial return. Its stated purpose was to develop advanced forms of artificial intelligence in a manner that would benefit humanity as a whole.

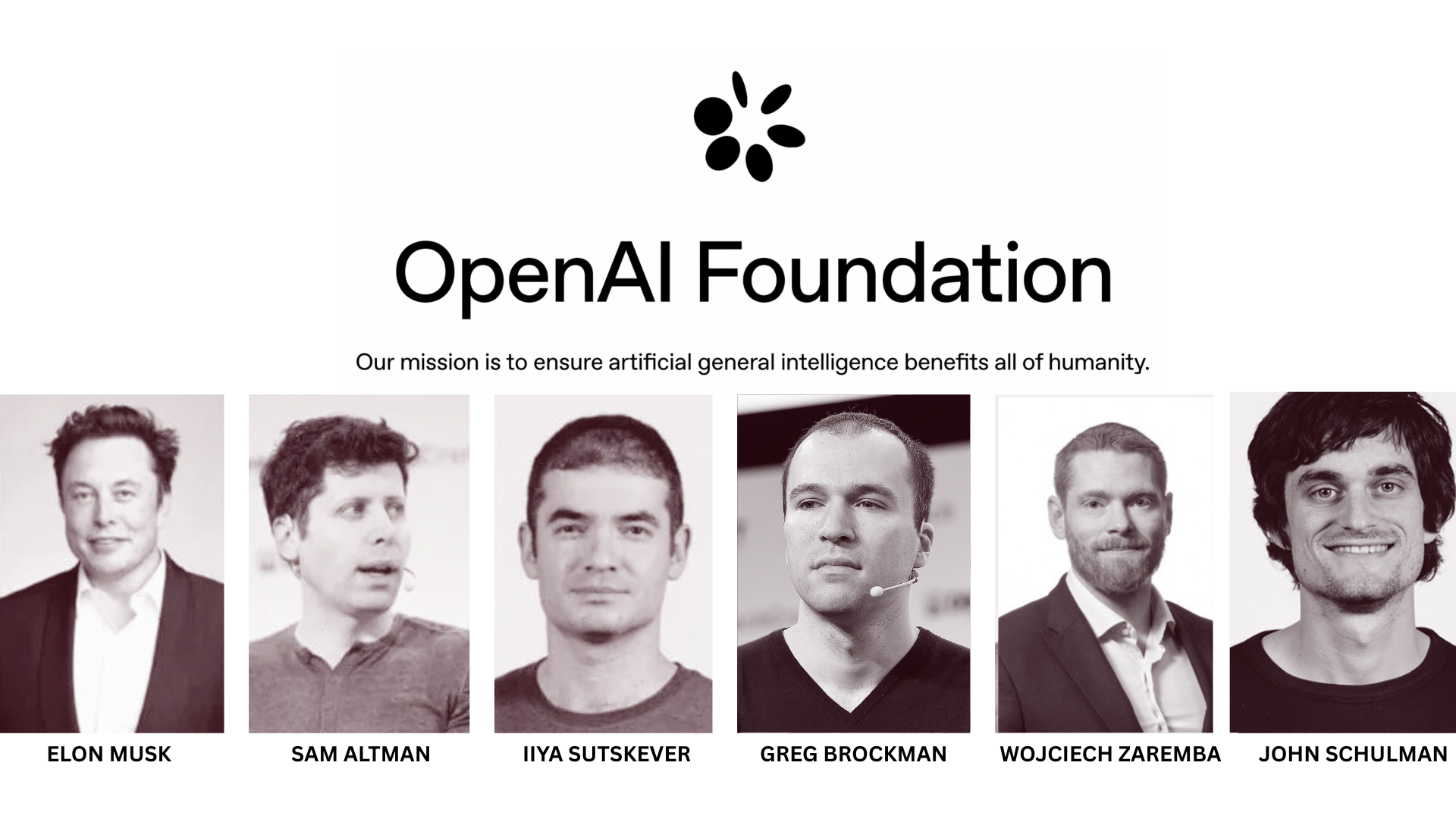

The organisation was named OpenAI. Among those involved in its founding were Elon Musk, Sam Altman, Greg Brockman, Ilya Sutskever and John Schulman. A pledge of one billion dollars accompanied its launch, placing it immediately among the more ambitious efforts in the field.

At that time, progress in artificial intelligence was accelerating. Advances in deep learning, from AlexNet in 2012 to the work that would produce AlphaGo between 2014 and 2016, had begun to demonstrate what large-scale models could achieve. Much of this work was taking place within large technology companies. Research was increasingly concentrated within a few organisations: Google, which had acquired DeepMind in 2014; Facebook, now Meta, through its AI research division; and Microsoft.

This concentration raised concerns. If the most capable systems were developed within a small number of organisations, their direction would be shaped by commercial priorities. There was a risk that powerful systems would be controlled by a limited set of interests. At the same time, there were warnings about the potential consequences of more advanced forms of artificial intelligence, particularly artificial general intelligence. Some argued that systems of that kind, if developed without restraint, could be misused for purposes such as surveillance, manipulation or military application.

Another concern was the lack of open collaboration. Many of the most significant advances were not shared widely. Research was often held within private organisations, limiting broader scrutiny and participation. The stated intention behind OpenAI addressed this directly. It proposed that research should be conducted openly where possible, that findings should be shared, and that the development of advanced systems should take place with an explicit focus on safety and long-term outcomes.

In its early years, the organisation focused on problems that could be tested and measured. Work in reinforcement learning produced systems that improved through repeated interaction with simulated environments, learning by playing against themselves and refining their strategies over time. In 2017, this approach produced a bot that defeated professional players in one-on-one matches of Dota 2, a complex strategy game. A team-based successor, OpenAI Five, followed. Playing five-on-five, a format requiring coordination and long-term planning, it defeated the reigning world champions, OG, in 2019.

At the same time, research in natural language processing began to take shape. Building on the transformer architecture, introduced by researchers at Google in 2017, OpenAI developed its Generative Pre-trained Transformer (GPT) models, the first of which appeared in 2018. These systems could generate and interpret text with increasing fluency. Early versions demonstrated the ability to produce coherent language across extended passages, though the limits of this capability were still being explored.

Alongside this work, the organisation invested in research on alignment and safety. The aim was to ensure that future systems would behave in ways that were helpful, reliable and consistent with human values. This included efforts to understand how advanced systems might be guided and constrained as their capabilities increased.

By 2019, a practical difficulty had become clear. Training large models required substantial computational resources, and the cost of building and operating these systems exceeded what a purely non-profit structure could sustain. In response, OpenAI introduced a revised arrangement, forming a capped-profit entity known as OpenAI LP, which remained under the control of its non-profit parent. This allowed it to attract investment while limiting the financial returns available to investors.

A partnership with Microsoft followed, bringing an investment of one billion dollars and access to large scale cloud infrastructure through Azure. This infrastructure became central to the training of subsequent models.

In 2020, the organisation released GPT-3, a system notable for its scale and its ability to generate text that was often coherent and contextually appropriate. Offered to developers through a commercial API, it was applied to a range of tasks, including writing, translation and code generation. The release marked a shift from research demonstration to wider application.

In November 2022, ChatGPT brought these capabilities into a conversational form. Users could interact directly with a language model, receiving responses that followed the structure of dialogue. Adoption was rapid. The system was reported to have reached one hundred million users within about two months. Around the same period, models such as DALL·E extended these methods into image generation, producing images from written prompts, while Codex applied similar techniques to programming tasks.

These developments were accompanied by renewed scrutiny. Some critics argued that the move towards a capped-profit structure and closer partnerships with large technology companies sat uneasily with the organisation’s original aims. Concerns were also raised about bias, misinformation and the broader effects of deploying such systems at scale. In response, the organisation continued its work on alignment and safety, seeking to address these issues as capabilities expanded.

The influence of OpenAI has been significant. Its language models have made artificial intelligence more accessible to a wide range of users, including businesses, educators, developers and individuals. Its research has contributed to advances in natural language processing, reinforcement learning and the broader discussion of how such systems should be governed. Its work has also encouraged competition, with other organisations accelerating their own efforts in response.

Development is ongoing. The organisation continues to describe artificial general intelligence as central to its mission, alongside a focus on safety, alignment and expanded capability. Current work includes systems that operate across different forms of data, including text, images and audio, and applications in areas such as coding and robotics.

The position it now occupies is not entirely straightforward. It began as a non-profit research laboratory with an emphasis on openness and shared benefit. It now operates as a developer of widely used systems with substantial computational requirements and external partnerships. How these two roles are reconciled is not always clear.

The intention set out at its founding remains present, though it has been tested by the scale of what followed. Whether that intention can be maintained as systems become more capable is not yet settled. What is clear is that the organisation has played a central role in shaping how artificial intelligence is developed and used, and that its influence continues to extend beyond the terms on which it was first introduced.