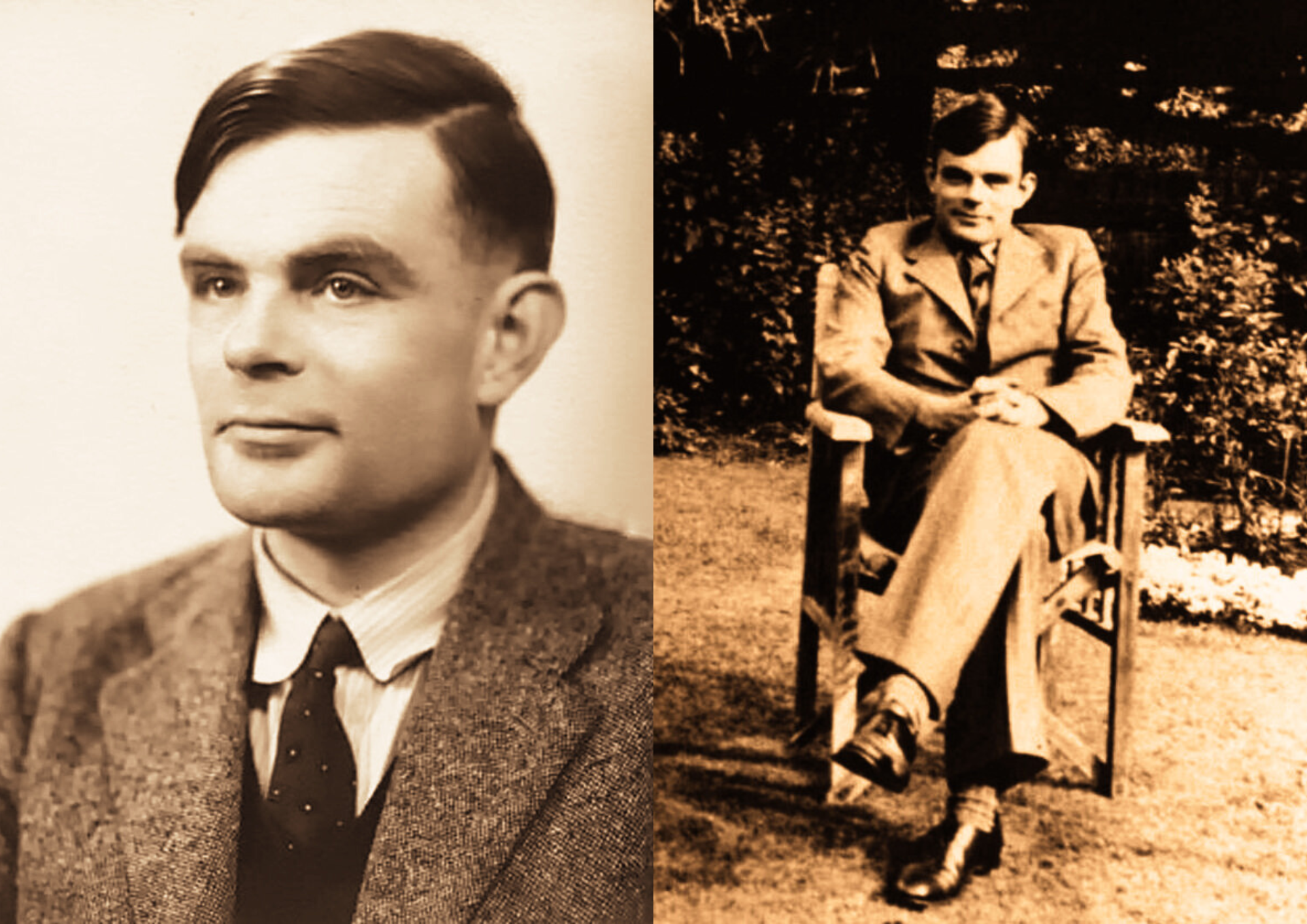

In 1950, British mathematician and computer scientist Alan Turing published a paper that did not simply advance a field; it quietly unsettled it. Writing in the journal Mind, he posed a question that seemed, at first glance, almost childlike in its simplicity.

Can machines think?

It was not asked lightly. Nor was it answered directly.

Instead, in “Computing Machinery and Intelligence,” Turing reframed the problem in a manner that would outlast the debate itself. If thinking could not be cleanly defined, perhaps it could be approached from the outside. Perhaps it could be observed.

From that shift, an entire discipline began to take form.

Turing had already demonstrated, during the war, that machines could perform tasks once thought to require human ingenuity. His work on the German Enigma cipher had established him as a figure of rare practical intelligence. Yet his postwar interests moved in a different direction. He was less concerned with what machines could calculate than with what they might become.

At the time, computers were austere instruments. They calculated, they stored, they followed instructions. The notion that they might engage in conversation, let alone display something resembling intelligence, was treated as speculation at best.

Turing did not treat it as speculation.

He proposed what he called the imitation game, now widely known as the Turing Test. Its structure was disarmingly simple. A human judge would conduct a text-based conversation with two unseen participants. One would be human. The other, a machine. If the judge could not reliably distinguish between them, the machine would be said to exhibit intelligence.

The elegance of the idea lay in what it avoided. Turing did not attempt to define thought, consciousness, or understanding. He sidestepped them entirely. The question was no longer whether a machine truly thought, but whether it could behave in a way that was indistinguishable from something that did.

It was, in effect, a journalist’s test. Not what something is, but what it can convincingly pass as.

From there, the implications unfold almost of their own accord.

If intelligence could be imitated, then it could be engineered. If it could be engineered, then it could be studied. And if it could be studied, then it belonged not to philosophy alone, but to science.

Turing’s predictions followed the same line of reasoning. He suggested that machines might one day learn from experience, a notion that would later surface in what is now called machine learning. He anticipated that, within decades, computers might succeed in misleading human judges in short conversations. He also recognised, with a clarity that has aged rather well, that the principal objections would not be technical, but philosophical.

They have been.

The decades that followed saw early attempts to bring his ideas into practice. Systems such as ELIZA and PARRY demonstrated that even limited pattern matching could produce the impression of conversation. Later developments, including IBM’s chess computer Deep Blue, showed that machines could outperform humans in structured domains. More recent systems, produced by Google and other firms, have extended this into language itself.
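How little machinery the impression of conversation requires can be seen in a minimal sketch of ELIZA-style pattern matching. It is an illustration only, not a reconstruction of ELIZA's actual script: the rules, the pronoun table, and the names `RULES`, `REFLECTIONS`, `reflect`, and `respond` are all assumptions made for the example.

```python
import re

# Hypothetical rules for illustration only; not ELIZA's original script.
# Each rule pairs a pattern with a reply template that reuses the captured text.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bbecause\b", re.IGNORECASE), "Is that the real reason?"),
]

# Swap first and second person so the echoed fragment reads as a reply.
REFLECTIONS = {
    "i": "you",
    "me": "you",
    "my": "your",
    "am": "are",
    "you": "me",
    "your": "my",
}

DEFAULT_REPLY = "Please tell me more."


def reflect(fragment):
    """Lower-case the fragment and swap its pronouns word by word."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(utterance):
    """Return the reply for the first rule that matches, else a stock phrase."""
    cleaned = utterance.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            fragments = [reflect(group) for group in match.groups()]
            return template.format(*fragments)
    return DEFAULT_REPLY


if __name__ == "__main__":
    print(respond("I need my coffee."))
    print(respond("Hello there"))
```

Nothing in the sketch models meaning. It matches surface patterns and hands the speaker's own words back, which is roughly why such programs could seem attentive without understanding anything.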

And yet, the central question remains unsettled.

The Turing Test, for all its influence, has never escaped criticism. It measures imitation, not understanding. A system may pass by appearing human without possessing any genuine comprehension. It rewards plausibility over truth. One might say it measures performance rather than substance.

Philosophers have taken issue with this. John Searle’s Chinese Room argument challenged the idea that symbol manipulation could ever amount to understanding. Others have proposed alternative tests, attempting to measure context, reasoning, or grounding in reality rather than conversational fluency.

None have displaced Turing’s formulation.

Partly because it is so difficult to improve upon. Partly because it exposes, rather than resolves, the difficulty at the heart of the matter.

What does it mean to think?

Turing himself did not claim to have answered that question. He merely showed that it might be approached indirectly, through behaviour rather than definition. That alone was enough to redirect the course of research.

Today, his influence is everywhere, though often unacknowledged. Systems that process language, recognise images, or make decisions under uncertainty all operate within a framework he helped define. The very notion that intelligence can be simulated rests, in part, on his argument.

There is, too, a certain restraint in his work that is often missing from later discussions. He did not promise imminent breakthroughs. He did not insist that machines would become human. He suggested, instead, that the boundary might blur.

It has, though not entirely.

Machines now write, respond, assist, and recommend. They produce language that, in many cases, would have satisfied the conditions of his test. Yet the suspicion remains that something essential has been imitated rather than achieved.

That tension is precisely what gives the question its durability.

Can machines think?

More than seventy years on, it has not been settled. It has simply been reframed, again and again, in the light of each new advance.

And that, one suspects, would not have surprised Turing in the least.