Before AI music could be measured, it had to be defined.

There is a point, rarely noticed at the time, when a new technology stops being described loosely and begins to take shape in language that can be used, tested and applied.

For artificial intelligence in music, that moment may now be arriving.

In early 2026, The Sonic Intelligence Academy released its first quarterly AI Music Intelligence Report. It does not attempt to announce a new form of music. That had already begun to emerge. Instead, it addresses a quieter problem that has sat beneath the public discussion: what exactly counts as AI music?

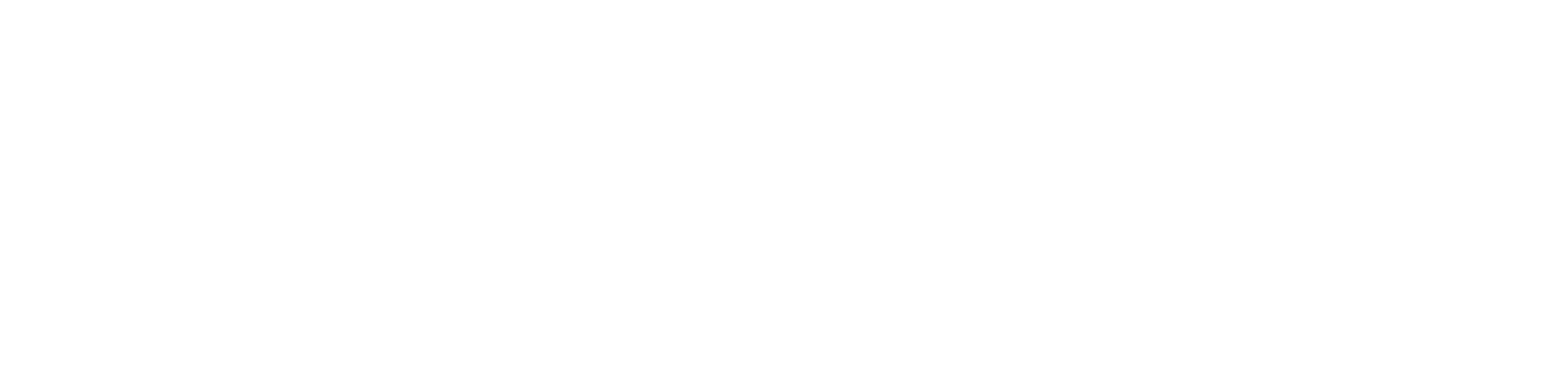

Until now, most references have been imprecise. A track produced entirely by a generative system is often placed in the same category as a song written, performed or arranged by a human using AI tools. The two processes are treated as one. The report begins from the position that they are not.

Its central contribution is a classification framework. It divides AI music into three categories, designed not as theory but as working definitions that artists themselves can apply.

The first describes work in which human and AI contributions are combined. A songwriter may compose lyrics while relying on AI for production, or perform vocals over machine-generated arrangements. The defining feature is that a human remains responsible for one or more core creative roles.

The second category addresses a more specific development. It applies where artists use AI to replicate or extend their own voice. Here, authorship is neither fully traditional nor fully synthetic. The voice is recognisable, but the process of producing it has changed.

The third category is the most complete form of automation. Melody, lyrics, production and arrangement are generated by AI systems, with the human role limited to directing and selecting outputs.

The framework is intended to be used beyond the report itself. It is presented as an open standard, available to record labels, platforms and rights organisations. Its inclusion in The Metrics of Music, a reference text used within the industry, suggests that it has already begun to move into wider circulation.

Alongside the definitions, the report offers an early view of how AI is actually being used. The results do not align with the dominant assumption that AI music is largely machine-made. Only 19 per cent of submitted tracks were classified as fully generated by machines. The remaining 81 per cent involved some form of collaboration between human and AI.

That proportion introduces a complication. If most work in this category still depends on human direction and contribution, then policies that treat all AI music as equivalent risk misunderstanding what is being produced. The distinction is not merely technical. It reaches into questions of credit, ownership and value.

The report does not attempt to resolve those questions. It does something more limited, and perhaps more significant. It establishes a language through which they can be approached.

Moments like this rarely present themselves as turning points. There is no clear break, no announcement that a threshold has been crossed. Instead, a framework appears, categories are set out, and over time they begin to shape how a field is understood.

Before AI music could be measured, it had to be defined. This is one of the first attempts to do so with any precision.