A move was played in Seoul that did not look correct.

It came early in the second game. Those watching assumed it was an error. It did not follow established patterns, and it did not resemble the accumulated judgement of players who had studied the game for decades.

The move held.

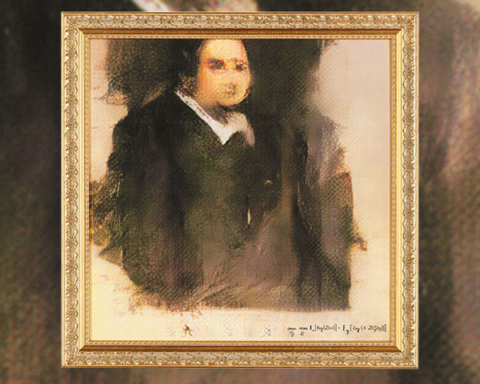

The match took place in March 2016 between AlphaGo, developed by DeepMind, and Lee Sedol, a nine-dan grandmaster and eighteen-time world champion, widely regarded as one of the strongest players of his generation. It was played in Seoul, South Korea, over five games, with a prize of one million dollars. It was watched by millions, in part because the game itself had long been considered beyond the reach of machines.

Go has been played for more than 2,500 years. It is often described as the most complex strategy game in existence, not only because of the number of possible positions, but because of how those positions are understood. The figure often quoted for chess, around 10 to the power of 123, describes the size of its game tree; the number of legal chess positions is far smaller, commonly estimated at around 10 to the power of 44. Go has around 10 to the power of 170 legal positions, a number that exceeds estimates of the number of atoms in the observable universe. A brute-force search of this space is not practical.
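To give a sense of that scale, the arithmetic can be sketched in a few lines. The Go figure comes from the text. The atom count is the usual order-of-magnitude estimate, and the search rate is a purely hypothetical machine chosen for illustration, not a description of any real hardware.

```python
# Rough scale of an exhaustive search over Go positions.
# The rate below is an illustrative assumption, not a real benchmark.
GO_POSITIONS = 10**170          # approximate count of legal Go positions (from the text)
ATOMS_IN_UNIVERSE = 10**80      # common order-of-magnitude estimate
POSITIONS_PER_SECOND = 10**18   # hypothetical machine: a billion billion positions per second
SECONDS_PER_YEAR = 365 * 24 * 60 * 60


def years_to_enumerate(positions: int, rate: int) -> int:
    """Whole years needed to visit every position once at the given rate."""
    return positions // (rate * SECONDS_PER_YEAR)


def order_of_magnitude(n: int) -> int:
    """floor(log10(n)) for a positive integer, computed from its digit count."""
    return len(str(n)) - 1


ratio = GO_POSITIONS // ATOMS_IN_UNIVERSE
years = years_to_enumerate(GO_POSITIONS, POSITIONS_PER_SECOND)

print(f"Go positions per atom: about 10^{order_of_magnitude(ratio)}")
print(f"Years to enumerate:    about 10^{order_of_magnitude(years)}")
```

Even at this imagined speed, the enumeration would take on the order of 10 to the power of 144 years, which is why exhaustive search was never a realistic option.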

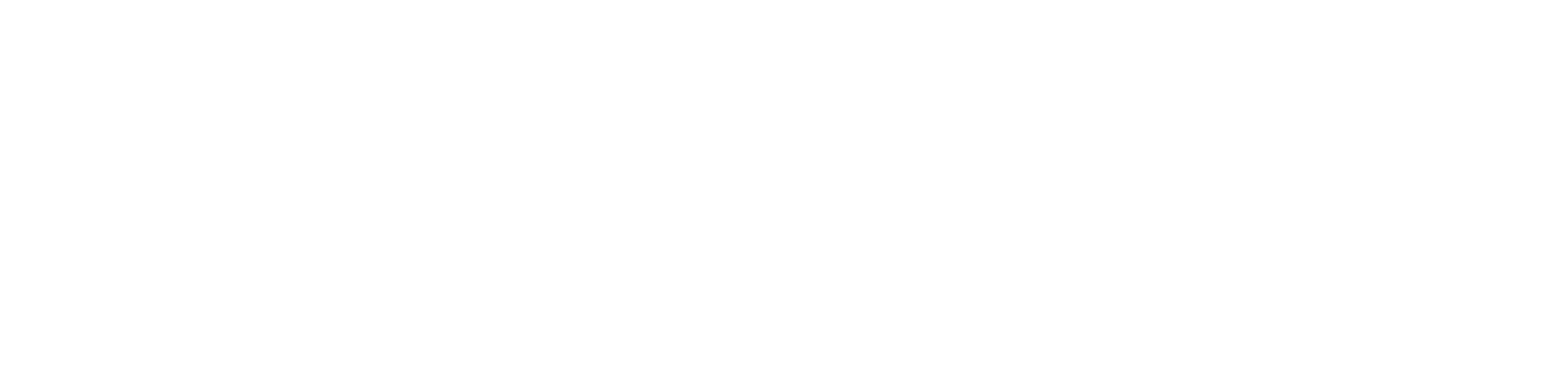

The difficulty is not only scale. In chess, positions can be evaluated using relatively clear measures, with pieces assigned values and positions assessed accordingly. In Go, the situation is more abstract. Advantage is not easily reduced to numbers. Strong play depends on pattern recognition, long-term planning and a form of judgement often described by players as intuition or feel. Before 2016, many researchers believed that this form of reasoning would remain difficult to reproduce in a machine.
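The kind of measure available in chess can be illustrated with a simple material count. This is a minimal sketch using the conventional textbook piece values; the function name and the input format are assumptions made for the example, and real chess engines weigh far more than material.

```python
# Conventional textbook piece values; the king is not counted as material.
PIECE_VALUES = {"P": 1, "N": 3, "B": 3, "R": 5, "Q": 9, "K": 0}


def material_balance(pieces: str) -> int:
    """Material score from White's point of view.

    Uppercase letters are White pieces, lowercase are Black.
    Any other character (digits, slashes) is ignored, so the
    board field of a FEN string can be passed in directly.
    """
    score = 0
    for ch in pieces:
        value = PIECE_VALUES.get(ch.upper())
        if value is None:
            continue  # not a piece letter
        score += value if ch.isupper() else -value
    return score


# White: king, rook, two pawns. Black: king, knight, three pawns.
print(material_balance("KRPP" + "knppp"))  # 1
```

No comparably simple count exists for Go, where every stone is identical and its worth depends entirely on its relationship to the stones around it.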

Earlier systems had relied on calculation. They searched through possible moves, extending lines of play forward and selecting the most promising outcome. This approach had been effective in chess, but it struggled in Go. The number of possible moves was too large, and the absence of simple evaluation made it difficult to determine which positions were favourable.

AlphaGo approached the problem differently. It was trained using deep reinforcement learning and neural networks rather than relying solely on search. Two networks were used. A policy network predicted which moves were most likely to be effective based on patterns learned from previous games. A value network evaluated positions, estimating how likely a given board state was to end in a win. These networks were trained not only on recorded human games, but also through self-play. The system played millions of games against itself, refining its decisions through repetition and feedback.

To guide its play, AlphaGo also used a method known as Monte Carlo Tree Search. Rather than exploring every possible move, it focused on the most promising lines, simulating outcomes and selecting moves that led to stronger positions over time. This allowed it to operate efficiently within a space that could not be searched exhaustively.
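The general idea of Monte Carlo Tree Search can be shown on a far smaller game. The sketch below is not AlphaGo's search: it is the plain textbook variant (UCT with random playouts), applied to a toy game in which players alternately take one to three stones from a pile and whoever takes the last stone wins. The game, the class and function names, and the exploration constant are all assumptions chosen for illustration. AlphaGo replaced the random playouts and uniform move choice with guidance from its networks.

```python
import math
import random


def legal_moves(stones):
    """A player may take 1, 2 or 3 stones, but no more than remain."""
    return [m for m in (1, 2, 3) if m <= stones]


class Node:
    def __init__(self, stones, player, parent=None, move=None):
        self.stones = stones      # stones left in the pile
        self.player = player      # player to move here (0 or 1)
        self.parent = parent
        self.move = move          # move that led here from the parent
        self.children = []
        self.untried = legal_moves(stones)
        self.visits = 0
        self.wins = 0.0           # wins for the player who moved INTO this node


def uct_child(node, c=1.4):
    """Pick the child balancing observed win rate against exploration."""
    return max(
        node.children,
        key=lambda ch: ch.wins / ch.visits
        + c * math.sqrt(math.log(node.visits) / ch.visits),
    )


def rollout(stones, player, rng):
    """Play random moves to the end; return whoever takes the last stone."""
    while True:
        stones -= rng.choice(legal_moves(stones))
        if stones == 0:
            return player
        player = 1 - player


def mcts(stones, iterations=2000, seed=0):
    """Return the move for player 0 that the search visited most often."""
    rng = random.Random(seed)
    root = Node(stones, player=0)
    for _ in range(iterations):
        node = root
        # 1. Selection: descend through fully expanded nodes.
        while not node.untried and node.children:
            node = uct_child(node)
        # 2. Expansion: add one unexplored move, if any remain.
        if node.untried:
            move = node.untried.pop()
            child = Node(node.stones - move, 1 - node.player, node, move)
            node.children.append(child)
            node = child
        # 3. Simulation: a terminal node was won by the player who just moved.
        if node.stones == 0:
            winner = 1 - node.player
        else:
            winner = rollout(node.stones, node.player, rng)
        # 4. Backpropagation: update statistics along the path to the root.
        while node is not None:
            node.visits += 1
            if node.parent is not None and winner == node.parent.player:
                node.wins += 1
            node = node.parent
    return max(root.children, key=lambda ch: ch.visits).move


print(mcts(3))  # taking all three stones wins immediately
print(mcts(5))
```

In this toy game the winning strategy is to leave the opponent a multiple of four stones, so from a pile of five the search would be expected to settle on taking one. The same four steps of selection, expansion, simulation and backpropagation apply at any scale; what changes in Go is that the tree is too large for random playouts alone to be informative.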

The match began on 9 March 2016. In the first game, AlphaGo played with a level of strength that surprised both Lee Sedol and those observing. Lee later described himself as shocked. The result was a win for the machine.

The second game, played on 10 March, contained the move that drew wider attention. On the thirty-seventh turn, AlphaGo played a move that was initially judged by experienced commentators to be a mistake. It appeared to violate established principles of play. As the game continued, it became clear that the move had altered the structure of the board in a way that was both effective and unexpected. It has since been studied as an example of a line of play that had not previously been considered.

The third game, played on 12 March, was more decisive. AlphaGo maintained control of the position, and with that win secured the match. At three games to none, the outcome was settled.

The fourth game, on 13 March, produced a different result. Lee Sedol played a move on the seventy-eighth turn that disrupted AlphaGo’s position. The system struggled to respond, made errors, and eventually lost. This was the only game AlphaGo lost in the match, demonstrating that it remained capable of failure under certain conditions.

The fifth game, on 15 March, returned to the earlier pattern. The play was complex, but AlphaGo was able to outmanoeuvre its opponent in the later stages. The final score was four games to one.

The result marked the first time a machine had defeated a world-class Go player in a full match. More significantly, it showed that a system could operate in a domain that had been considered dependent on human intuition. AlphaGo did not rely on exhaustive calculation. It developed a form of strategic judgement through training and self-play.

The implications extended beyond the game. The method used to train AlphaGo was applied to other problems. Systems based on similar principles were used in robotics, in autonomous systems and in scientific research. Later versions of the system were developed. AlphaGo Zero, introduced in 2017, learned the game without using human data. AlphaZero, first described in late 2017 and published in 2018, applied the same approach to Go, chess and shogi. MuZero, first described in 2019 and published in 2020, learned to play without being given the rules in advance.

The match also altered how artificial intelligence was understood. It demonstrated that a machine could produce moves that appeared creative, not because it was imitating a known pattern, but because it had discovered a strategy through its own training. This suggested that such systems could contribute to knowledge rather than simply apply it.

The outcome did not resolve the question of how far this approach could be extended. It did, however, establish that the boundary between calculation and judgement was less fixed than previously assumed. The move that appeared incorrect at first did not end the discussion. It marked a point at which a different form of reasoning became visible.