In 2012, an image recognition system reduced its error rate by a margin that did not fit the pattern of previous years.

The result appeared in the ImageNet Large Scale Visual Recognition Challenge, an annual competition that had become a benchmark of progress in computer vision. Models were asked to classify images across one thousand categories, drawing from a dataset of more than 1.2 million labelled training examples. Performance was assessed using the top-five error rate, which measured how often the correct answer failed to appear among the model’s five most likely predictions.
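The top-five metric is simple to state in code. The sketch below is a minimal illustration with made-up scores over seven hypothetical classes rather than the competition's one thousand. For each example it ranks the classes by score and counts a miss only when the true label falls outside the k highest-ranked classes.

```python
def top_k_error(scores, labels, k=5):
    """Fraction of examples whose true label is not among the k highest-scoring classes."""
    misses = 0
    for row, label in zip(scores, labels):
        # Class indices ordered from highest to lowest score; keep the first k.
        top_k = sorted(range(len(row)), key=row.__getitem__, reverse=True)[:k]
        if label not in top_k:
            misses += 1
    return misses / len(labels)


# Hypothetical scores for three images over seven classes.
scores = [
    [0.10, 0.30, 0.05, 0.20, 0.15, 0.12, 0.08],  # true class 4 ranks third
    [0.40, 0.20, 0.15, 0.10, 0.08, 0.05, 0.02],  # true class 6 ranks last
    [0.05, 0.05, 0.60, 0.10, 0.10, 0.05, 0.05],  # true class 2 ranks first
]
labels = [4, 6, 2]

print(top_k_error(scores, labels, k=5))  # one of three images missed
print(top_k_error(scores, labels, k=1))  # two of three images missed
```

The first image shows why the top-five measure was used: the model's single best guess is wrong, but the correct class is still among its five most likely predictions, so it counts as a hit.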

Until that point, improvement had been gradual. The best system in 2011 recorded a top-five error rate of around 25.8 per cent. Progress was constrained by the methods in use. Models struggled with variation in lighting, overlapping objects and the complexity of real images. Feature extraction was largely manual, relying on hand-designed descriptors such as SIFT and requiring researchers to define in advance which aspects of an image should be recognised.

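In 2012, a model known as AlexNet reduced the top-five error rate to 15.3 per cent in its best submission (16.4 per cent for a version trained only on the competition data), against 26.2 per cent for the next-best entry. The scale of that change was difficult to ignore.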

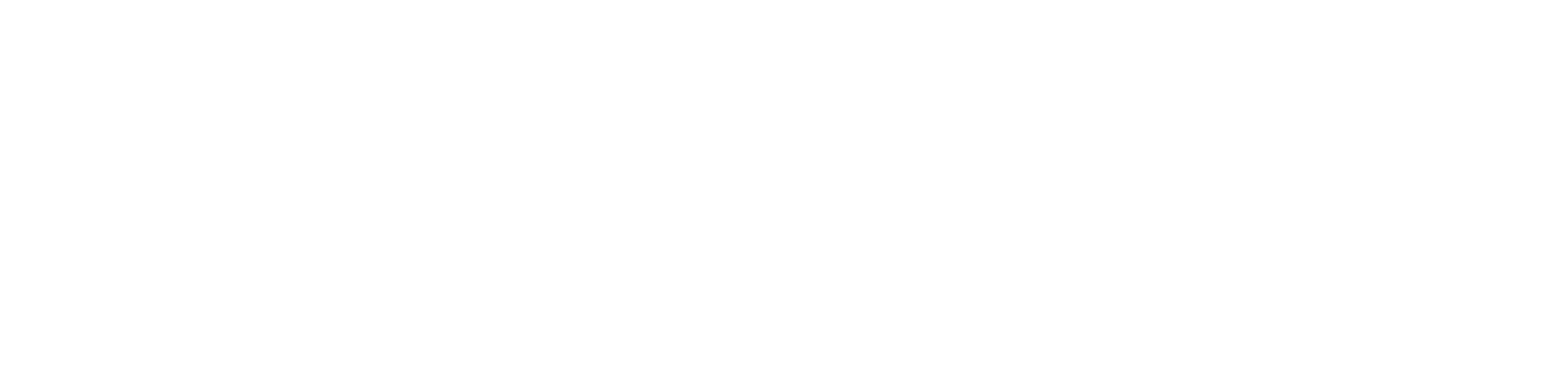

The system had been developed at the University of Toronto by Alex Krizhevsky, Ilya Sutskever and Geoffrey Hinton. It was a deep convolutional neural network with eight learned layers, five convolutional and three fully connected, making it considerably deeper than most previous models. Rather than relying on predefined features, it learned to detect edges, shapes and textures directly from the data.
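To make "learning to detect edges" concrete, the sketch below applies a single 3×3 filter to a toy greyscale image using the sliding-window operation a convolutional layer performs (strictly a cross-correlation, which is what most deep learning frameworks compute). The filter values are hand-set here as a hypothetical vertical-edge detector. In a network such as AlexNet the values are learned during training, each layer applies many filters at once, and the first layer works across three colour channels.

```python
def conv2d(image, kernel):
    """Valid 2-D cross-correlation: no padding, stride 1."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    out = [[0.0] * out_w for _ in range(out_h)]
    for i in range(out_h):
        for j in range(out_w):
            # Weighted sum of the kh x kw window whose top-left corner is (i, j).
            total = 0.0
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            out[i][j] = total
    return out


# Toy 5x5 image: dark left columns, bright right columns (a vertical edge).
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hypothetical hand-set filter; a trained network would learn its own values.
kernel = [[-1, 0, 1],
          [-1, 0, 1],
          [-1, 0, 1]]

for row in conv2d(image, kernel):
    print(row)
```

The filter responds strongly where its window straddles the boundary between dark and bright pixels and gives zero over the uniform bright region. Stacking layers of such learned filters is what lets deeper layers respond to shapes and textures built from these simpler patterns.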

Several technical decisions contributed to its performance. The model used the rectified linear unit (ReLU) as its activation function in place of saturating functions such as the sigmoid and hyperbolic tangent, which slow training. This reduced training time and allowed the network to learn more efficiently. It was also trained on two NVIDIA GTX 580 graphics processors, with reported speeds of up to one hundred times those obtained using standard central processing units. This demonstrated that deep learning could be made practical with suitable hardware.
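The reason saturating activations slow training can be seen by comparing derivatives, since gradient descent scales each weight update by them. The sketch below assumes nothing beyond the standard textbook definitions of the three functions. For large positive inputs the sigmoid and tanh gradients shrink towards zero, while the ReLU gradient stays at one and the function itself costs only a comparison.

```python
import math


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def sigmoid_grad(x):
    s = sigmoid(x)
    return s * (1.0 - s)  # at most 0.25, reached at x = 0


def tanh_grad(x):
    return 1.0 - math.tanh(x) ** 2  # at most 1, reached at x = 0


def relu(x):
    return max(0.0, x)  # max(0, x): no exponentials needed


def relu_grad(x):
    return 1.0 if x > 0 else 0.0  # constant for any positive input


for x in [0.5, 2.0, 5.0]:
    print(f"x={x}: sigmoid'={sigmoid_grad(x):.5f}  "
          f"tanh'={tanh_grad(x):.5f}  relu'={relu_grad(x):.1f}")
```

As the input grows, the sigmoid and tanh gradients fall by orders of magnitude, so units that saturate barely learn. ReLU avoids this for positive inputs, although it passes no gradient at all for negative ones.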

To improve its ability to generalise, the system applied data augmentation, enlarging the training set by taking random crops of each image, flipping them horizontally and altering the intensity of their colours. It also used a technique known as dropout, in which randomly selected neurons were temporarily disabled during training to reduce overfitting. These methods allowed the model to perform more reliably on new data.
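Both techniques are short to sketch. The code below works on a toy 8×8 "image" stored as a list of rows. AlexNet itself cropped 224×224 patches from 256×256 images and used a drop probability of 0.5 in its first two fully connected layers. The function names are hypothetical. One deliberate difference: the original paper multiplied outputs by 0.5 at test time, whereas this sketch uses the common "inverted" variant, which rescales surviving units during training so inference needs no adjustment.

```python
import random


def random_crop(image, size, rng):
    """Take a size x size patch at a random position from a 2-D image (list of rows)."""
    top = rng.randint(0, len(image) - size)      # randint is inclusive at both ends
    left = rng.randint(0, len(image[0]) - size)
    return [row[left:left + size] for row in image[top:top + size]]


def horizontal_flip(image):
    """Mirror each row left to right."""
    return [list(reversed(row)) for row in image]


def augment(image, size, rng):
    """Random crop, then a horizontal flip with probability 0.5."""
    patch = random_crop(image, size, rng)
    return horizontal_flip(patch) if rng.random() < 0.5 else patch


def dropout(activations, p, rng, training=True):
    """Inverted dropout: zero each unit with probability p during training and
    scale survivors by 1 / (1 - p) so expected activations match inference."""
    if not training or p == 0.0:
        return list(activations)
    keep = 1.0 - p
    return [a / keep if rng.random() < keep else 0.0 for a in activations]


rng = random.Random(0)                                  # seeded for repeatability
image = [[r * 10 + c for c in range(8)] for r in range(8)]
patch = augment(image, 6, rng)
print(len(patch), len(patch[0]))                        # a 6 x 6 patch
print(dropout([1.0, 2.0, 3.0, 4.0], p=0.5, rng=rng))    # each unit is 0.0 or doubled
```

Because each training pass sees a slightly different crop and a different random subset of active units, the network cannot rely on any single pixel position or any single neuron. This is the intuition behind both methods' effect on overfitting.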

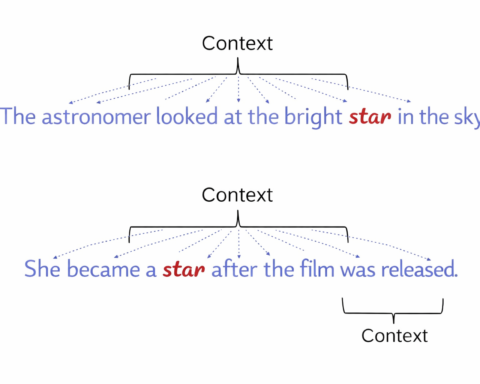

The result was not simply a better score. It indicated a change in method. Earlier approaches required careful design of features, with researchers determining in advance how images should be interpreted. AlexNet shifted this process into the model itself. Feature extraction became something the system learned, rather than something imposed from outside.

The effect was immediate. Deep learning, which had been regarded by some as impractical due to training limitations, began to attract wider attention. The use of neural networks expanded, and research activity increased. The demand for computational power grew alongside it, contributing to the development of specialised hardware. Graphics processing units and later tensor processing units became central to the training of large models.

The influence extended beyond image recognition. Techniques developed in this context were applied to other areas, including natural language processing, robotics and autonomous systems. Systems for facial recognition, medical imaging and object detection drew on similar approaches. The development of self-driving technology relied on related methods for interpreting visual input in real time.

Subsequent models continued the trend. In 2014, GoogLeNet, built on the Inception architecture, improved performance further. In 2015, ResNet recorded a top-five error rate of 3.57 per cent, below a commonly cited estimate of human performance of around 5.1 per cent. In 2016, deep reinforcement learning was applied in systems such as AlphaGo. In 2017, transformer models changed the way language was processed. By the 2020s, artificial intelligence had become embedded across industries including healthcare, finance and transport.

The shift that began in 2012 is often described as the start of the modern deep learning era. It led to changes in how models were designed, how they were trained and how they were deployed. It also altered how people interacted with systems that relied on perception, from voice assistants to autonomous vehicles.

The result did not emerge from a single decision. It followed from a combination of architecture, training method and hardware capability. The competition provided a setting in which that combination became visible.

What had been treated as a difficult problem began to appear more tractable. The methods that produced that result are now widely used. The extent to which they can continue to scale remains an open question. What is clear is that the change in 2012 did not remain confined to a single task. It set a direction that has continued to shape the field.