By 1980, artificial intelligence had already disappointed its backers more than once.

Funding had been reduced. Expectations had been revised. The grand claims of earlier decades had not translated into systems that could operate reliably outside controlled settings. For a time, the field appeared to be narrowing rather than expanding.

Then a different approach began to take hold.

Instead of attempting to recreate general intelligence, researchers focused on something more limited and more practical. They began to build systems that could perform a single task with a level of competence comparable to a human specialist. These systems became known as expert systems.

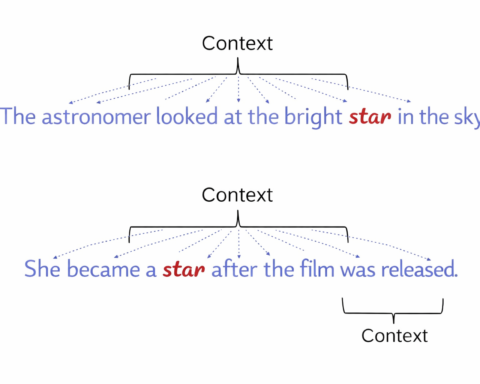

An expert system did not attempt to learn in the way later models would. It relied on a structured body of knowledge. This knowledge was stored as a set of facts and rules, describing how decisions should be made within a specific domain. A separate component, the inference engine, applied those rules to new inputs, producing conclusions based on logical steps. The user interacted with the system through an interface that guided the exchange, often in the form of questions and responses.

The process was direct. A user would provide information, such as symptoms in a medical context or conditions in an engineering problem. The system would compare this input against its stored rules, moving through a chain of reasoning until it arrived at a recommendation. The result was presented as if it had been produced by an expert.
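The chain of reasoning described above can be sketched in a few lines of Python. This is a minimal illustration of one inference strategy, forward chaining, and not a reconstruction of any historical system. The `Rule` class, the `forward_chain` function and the toy configuration rules are all hypothetical names and data invented for this example. Real systems held far larger rule sets, and some, MYCIN among them, reasoned backwards from goals rather than forwards from facts.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rule:
    """IF every condition is a known fact THEN assert the conclusion."""
    conditions: frozenset
    conclusion: str


def forward_chain(facts, rules):
    """Fire rules repeatedly until no rule can add a new fact.

    Returns the final set of facts and the rules that fired, in order.
    """
    known = set(facts)
    trace = []
    changed = True
    while changed:
        changed = False
        for rule in rules:
            # A rule fires once: when all its conditions hold and its
            # conclusion is not already known.
            if rule.conclusion not in known and rule.conditions <= known:
                known.add(rule.conclusion)
                trace.append(rule)
                changed = True
    return known, trace


# Hypothetical knowledge base: invented facts and rules for a toy
# computer-configuration domain, used only to show the mechanism.
RULES = [
    Rule(frozenset({"order_has_disk_drive", "no_disk_controller"}),
         "add_disk_controller"),
    Rule(frozenset({"add_disk_controller", "backplane_full"}),
         "add_expansion_cabinet"),
    Rule(frozenset({"add_expansion_cabinet"}),
         "add_second_power_supply"),
]

if __name__ == "__main__":
    user_input = {"order_has_disk_drive", "no_disk_controller", "backplane_full"}
    facts, trace = forward_chain(user_input, RULES)
    for rule in trace:
        print(sorted(rule.conditions), "->", rule.conclusion)
```

The knowledge base (`RULES`) is kept apart from the inference engine (`forward_chain`), mirroring the separation of components described earlier. Calling `forward_chain` with facts that no rule mentions returns those facts unchanged and an empty trace: the engine has nothing to say about input its rules do not cover.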

The appeal of this approach was immediate. It did not require the system to understand the world in a broad sense. It required it to perform well within a defined set of conditions. For businesses and institutions, this was sufficient. Decisions that had depended on scarce expertise could be standardised and reproduced.

Several systems demonstrated what could be achieved. MYCIN, developed at Stanford, was able to assist in diagnosing bacterial infections and recommending treatments. DENDRAL, an earlier system, had been used to analyse chemical structures. XCON, deployed by Digital Equipment Corporation, configured computer systems for customers and was credited with reducing errors and saving significant costs. These systems did not resemble general intelligence, but they produced results that were useful.

The timing was favourable. Computing power had improved, making it possible to store and process larger rule sets. Organisations were willing to invest in systems that could reduce reliance on human specialists. Governments also showed interest. Japan’s Fifth Generation Computer Systems project, launched in 1982, aimed to build machines designed for knowledge processing and logical inference, reinforcing the sense that artificial intelligence had found a practical direction.

By the mid-1980s, expert systems had become the dominant form of artificial intelligence. They were used in finance, healthcare, manufacturing and defence. Investment increased. Companies formed around the development and deployment of these systems. For a period, artificial intelligence appeared to have secured a place within industry.

The limitations were present from the beginning, though they were not always emphasised. The systems required detailed knowledge to be encoded in advance. This process was time-consuming and expensive. As conditions changed, the rules had to be revised, often by the same experts whose work the system was intended to reduce. The more complex the domain, the more difficult this maintenance became.

They also struggled with uncertainty. A rule-based system could perform well when the problem matched its structure. When the situation fell outside those rules, the system could not adapt. It did not learn from new examples. It could not extend its knowledge without being reprogrammed.

These constraints became more apparent as the systems were applied more widely. The cost of maintaining them increased. Their inability to adjust to changing conditions limited their usefulness. At the same time, alternative approaches began to emerge, particularly methods based on statistical learning, which offered a different way to handle variability.

By the early 1990s, the momentum had slowed. Many expert systems were withdrawn or replaced. Investment declined, contributing to another period of reduced confidence in artificial intelligence. The approach that had revived the field became associated with its limitations.

Yet the influence of expert systems did not disappear. They established the idea that artificial intelligence could operate within real organisations and produce measurable value. They introduced the concept of capturing expertise in a form that could be applied repeatedly. Elements of their structure remain visible in later systems, particularly in areas where rules and constraints continue to play a role.

The shift that followed moved away from encoding knowledge towards learning from data. That transition defines much of modern artificial intelligence. The period in which expert systems dominated is often treated as an interlude, but it served a purpose. It demonstrated that the field could produce working systems, even if those systems were narrower than the ambitions that preceded them.

The revival of artificial intelligence in the 1980s did not restore its earlier claims. It changed them.