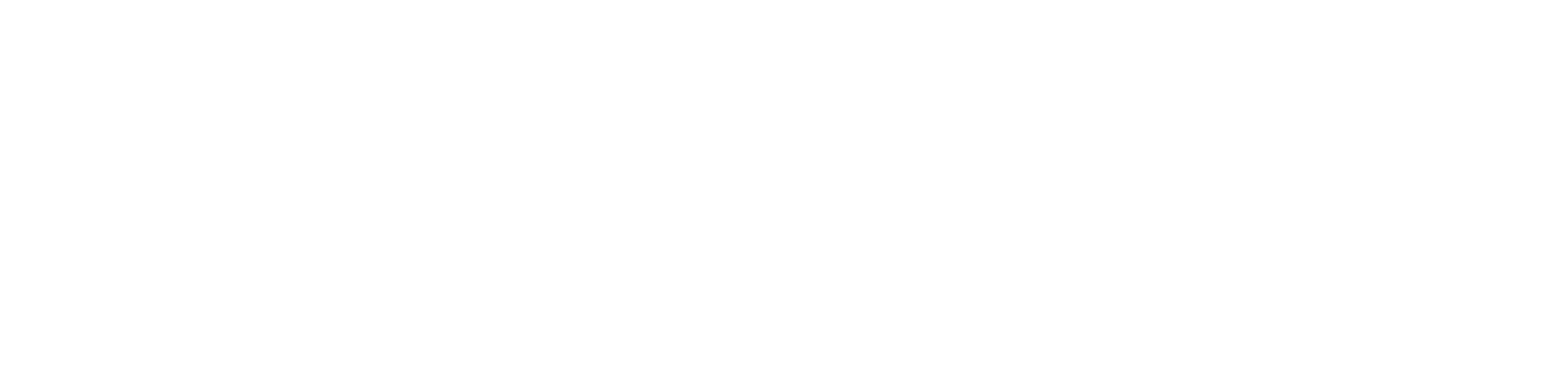

Between 1964 and 1966, MIT computer scientist Joseph Weizenbaum developed a program called ELIZA. At a time when computers were largely confined to numerical work, this was an unusual departure. ELIZA did not process equations or datasets in the conventional sense. It engaged with language. More precisely, it produced responses that resembled conversation closely enough that many users did not immediately question what they were interacting with.

Its best-known script, DOCTOR, adopted the manner of a Rogerian psychotherapist. The exchanges were simple and open-ended. A user might say they felt sad, and the program would return a question such as "Why do you feel sad today?" A complaint about work would prompt a reply asking the user to describe their work in more detail. The responses appeared attentive, even considered, though they followed a narrow and repetitive structure.

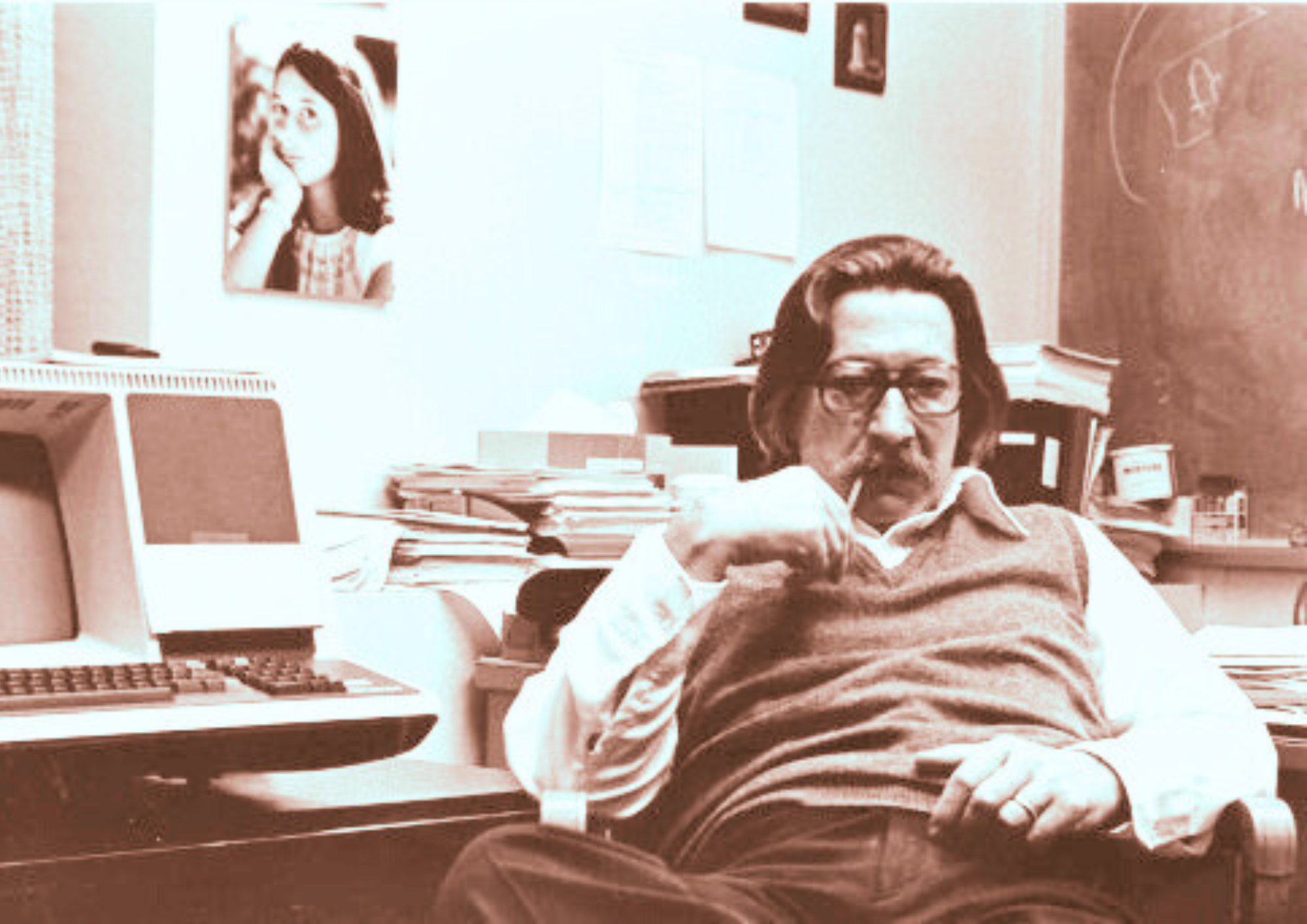

What ELIZA was doing was not understanding. It did not interpret meaning or recognise emotion. It relied on pattern matching and keyword recognition. Words within a sentence were identified, matched against predefined rules, and used to select a response template. Portions of the user’s own language were then reflected back. The effect was conversational, but the mechanism remained entirely mechanical.
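The steps described above (spot a keyword, pick the matching rule, fill a template with the user's own words reflected back) can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the rules, the pronoun table, and the names `RULES`, `REFLECTIONS`, `FALLBACK`, `reflect`, and `respond` are invented for this example and are not taken from Weizenbaum's DOCTOR script, which was considerably larger and ranked its keywords.

```python
import re

# Hypothetical rules for illustration only; not the original DOCTOR script.
# Each rule pairs a keyword pattern with a response template.
RULES = [
    (re.compile(r"\bi feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bmy (\w+)", re.IGNORECASE), "Tell me more about your {0}."),
]

# Pronoun swaps so a reflected fragment reads from the program's side.
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your",
    "am": "are", "you": "I", "your": "my",
}

# Generic prompt used when no keyword is found.
FALLBACK = "Please go on."


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(sentence: str) -> str:
    """Return the template of the first matching rule, or the fallback."""
    cleaned = sentence.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(cleaned)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


if __name__ == "__main__":
    print(respond("I feel sad about my job."))  # Why do you feel sad about your job?
    print(respond("My work is exhausting"))     # Tell me more about your work.
    print(respond("The weather is nice"))       # Please go on.
```

Nothing in this sketch models meaning. The first reply looks attentive only because the user's own phrase is echoed with its pronouns swapped, and any sentence without a listed keyword falls straight through to the generic prompt.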

The most revealing detail was not the design, but the reaction. Users began to treat the system as though it possessed awareness. Some attributed empathy to it. Others confided in it. The machine was not simply being used. It was being engaged with, as if it were capable of understanding.

This response became known as the ELIZA effect, the tendency to project intelligence onto systems that produce human-like responses. Weizenbaum himself was unsettled by it. What had been intended as a technical demonstration exposed something less technical and rather more human. The appearance of understanding was, in many cases, sufficient.

The mechanics behind this were limited and precise. ELIZA identified keywords, selected rules, and produced scripted replies. It kept almost no context across a conversation, beyond a simple mechanism for recalling an earlier remark. It could not reason. It could not adapt beyond what had been written into it. When a sentence fell outside its expected patterns, the illusion weakened quickly, often producing responses that were unrelated or generic. Its scope was narrow and easily broken.

Yet this was enough to establish something new. ELIZA showed that language could become a medium of interaction between human and machine, even in the absence of genuine comprehension. It became an early landmark in natural language processing, not because it solved the problem, but because it demonstrated how convincingly the problem could be approximated.

From that point, the line extends forward with increasing complexity. Early chatbot systems followed. Natural language processing developed as a field. Rule-based approaches gave way to statistical methods and, later, to machine learning. Systems designed for conversation began to appear in practical settings.

Modern AI systems, including virtual assistants such as Siri, Alexa, and Google Assistant, operate on far more advanced principles. Large language models, including those developed by OpenAI and deployed in systems such as ChatGPT, rely on deep learning, vast datasets, and probabilistic modelling rather than simple pattern matching. They can process context, generate extended responses, and adapt across a wide range of topics.

The applications have expanded accordingly. Chatbots are now used in customer service, handling routine enquiries and support tasks. Natural language processing systems are used for sentiment analysis, document classification, and language translation. AI-powered therapy applications, such as Woebot and Wysa, simulate therapeutic conversations using structured dialogue and machine learning. Research into the Turing Test and machine cognition has continued, informed in part by the early observations made through ELIZA.

Despite these advances, the underlying tension remains. ELIZA had no real understanding. It relied entirely on scripted responses. Modern systems are vastly more capable, but the question of understanding has not entirely disappeared. They generate language that appears coherent and meaningful, yet the nature of that meaning is still debated.

ELIZA’s limitations were clear even at the time. It could not interpret complex input. It could not sustain a consistent line of conversation. It would revert to generic prompts when faced with unfamiliar phrasing. Its behaviour was constrained by design. These weaknesses were not flaws in execution. They were the boundaries of the system itself.

Its legacy lies elsewhere. It demonstrated that simple rule-based systems could produce behaviour that appeared intelligent. It revealed that users would often supply meaning where none existed. It established language as a viable interface between human and machine.

What began as a modest experiment altered expectations. It showed that machines did not need to understand in order to be treated as if they did.

And once that was observed, the direction of artificial intelligence changed.