There was a moment, not long after the birth of artificial intelligence, when it seemed the age of thinking machines had already begun.

Machines would translate languages, hold conversations, solve problems, perhaps even rival human reasoning within a generation. The confidence was not quiet. It was public, funded, and, as it turned out, premature.

By 1966, that confidence had begun to drain away.

The early period of artificial intelligence, stretching from the mid-1950s into the mid-1960s, produced just enough success to be convincing. The 1956 Dartmouth Conference had formally introduced AI as a field. Programs could play games, prove simple theorems, and simulate fragments of conversation. ELIZA gave the impression, at least to casual observers, that a machine might understand language. The perceptron suggested that machines might learn from data.

Governments, particularly in the United States, took notice. Agencies such as ARPA (later renamed DARPA) funded research with the expectation that intelligent machines would have military and strategic value. The mood was expansive. The claims were, in some cases, extravagant.

Then the systems met the real world.

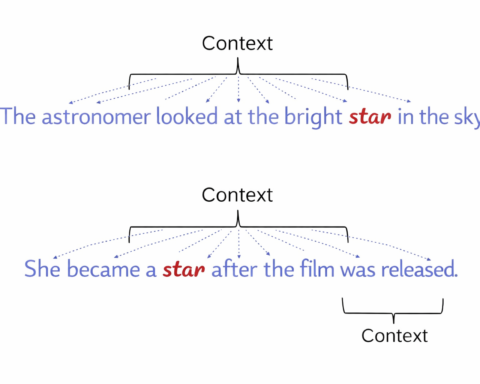

What worked in controlled demonstrations failed under ordinary conditions. Language programs could rearrange phrases but did not understand meaning. Early translation efforts, funded in part by Cold War urgency, produced results that were often unusable. The problem was laid bare in the 1966 ALPAC report, which concluded that machine translation had not delivered on its promises and was unlikely to do so soon. Funding for machine translation was cut soon afterward.

The difficulty ran deeper than language. Early AI systems could solve tightly defined problems, but they could not cope with ambiguity, context, or scale. A program that could play a passable game of chess could not describe a simple scene or navigate an ordinary room. Human reasoning, it became clear, was not a single problem waiting to be solved but a collection of problems, each stubborn in its own way.

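Even the most promising techniques showed limits. The perceptron, once heralded as a path to machine learning, could handle only the simplest patterns: in its single-layer form, it could learn only categories that a straight line (or its higher-dimensional equivalent) could separate. When Marvin Minsky and Seymour Papert later demonstrated these theoretical constraints in their 1969 book *Perceptrons*, enthusiasm cooled further. What had seemed like a foundation began to look like a dead end.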

The more immediate issue, however, was expectation. Early researchers had suggested that human-level intelligence might be achieved within a couple of decades. By the mid-1960s, the gap between those claims and observable progress had become difficult to ignore.

Funding bodies, rarely sentimental, adjusted accordingly.

In the United States, support for large-scale language projects was withdrawn after the ALPAC findings. In Britain, government backing weakened as confidence declined, particularly after the critical Lighthill report of 1973. By the early 1970s, the contraction had spread. Projects were scaled back or closed. Researchers moved into adjacent fields such as statistics, operations research, or conventional computing. The term artificial intelligence itself became, for a time, something to avoid.

This period came to be known as the first AI winter. It was not a total halt, but it was a clear retreat.

The effects were practical and immediate. Laboratories reduced their ambitions or disappeared. Investment, both public and private, shifted elsewhere. Neural networks, in particular, were largely abandoned, not because they were disproven in principle, but because they could not yet be made to work at scale with the available data and computing power.

And yet, the field did not die. It narrowed.

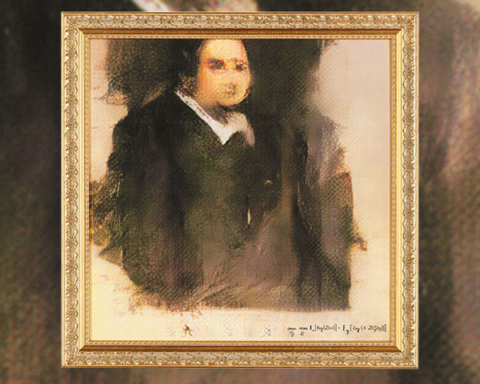

In the absence of grand claims, researchers began to focus on problems that could be solved. By the late 1970s, this led to the rise of expert systems, programs designed to operate within tightly defined domains using explicit rules. These systems lacked the generality that earlier visions had promised, but they worked well enough to be useful. They marked the beginning of AI’s first sustained commercial applications.

At the same time, advances in hardware slowly changed the landscape. More powerful computers made it possible to revisit ideas that had previously failed for practical reasons. Neural networks, set aside after the disappointments of the 1960s, would return in a more capable form decades later.

The first AI winter left a particular kind of legacy. It did not disprove the possibility of intelligent machines. It exposed the cost of assuming they were close at hand.

The lesson, if one can call it that, is not especially dramatic. Progress in this field tends to arrive unevenly. Breakthroughs are often followed by long periods of consolidation. The difficulty is not in demonstrating that something can be done in principle, but in making it work reliably, at scale, and under the untidy conditions of the real world.

By the time artificial intelligence regained momentum in the 1980s, the tone had changed. There was still ambition, but less certainty about timelines. The field had learned, through experience rather than theory, that intelligence was not a single threshold to be crossed, but a landscape to be navigated.