There was a point, in the late 1950s, when the idea of a machine learning from experience began to move from speculation into something more concrete. It did not arrive fully formed. It appeared in a form that was simple, almost disarmingly so, yet carried implications that would take decades to fully unfold.

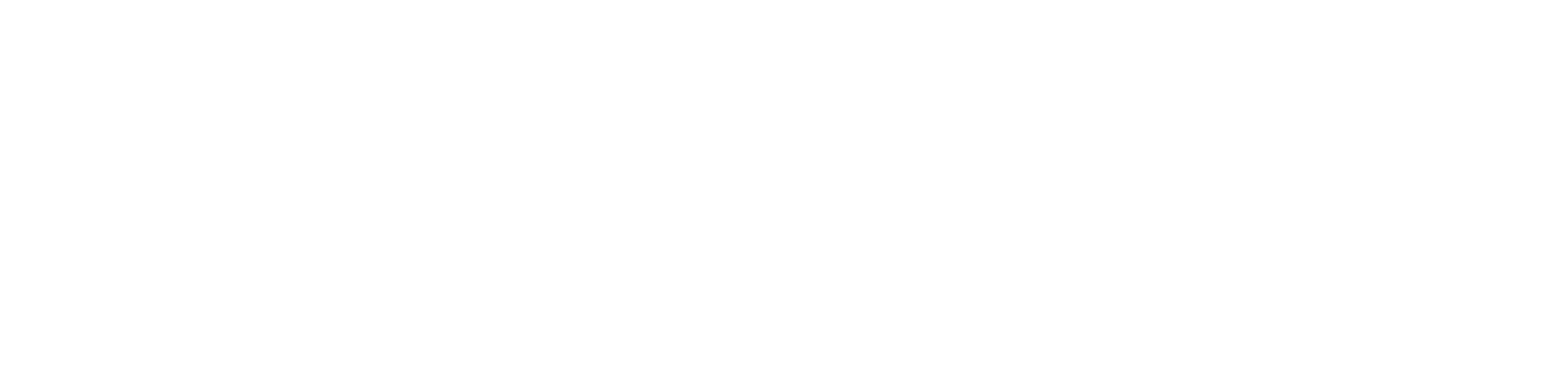

In 1958, the American psychologist and computer scientist Frank Rosenblatt introduced the Perceptron, an early model of artificial neural networks. At the time, computing was still largely concerned with calculation and instruction. The Perceptron suggested something different. It suggested that a machine might adjust itself.

Rosenblatt’s work marked one of the first serious attempts to create systems that could learn from data rather than follow fixed rules. The idea was direct. If a system could be shown examples, and could adjust its internal parameters when it made errors, then it might improve. That premise would later underpin developments in computer vision, natural language processing, and autonomous decision making, though in 1958 it remained both limited and uncertain.

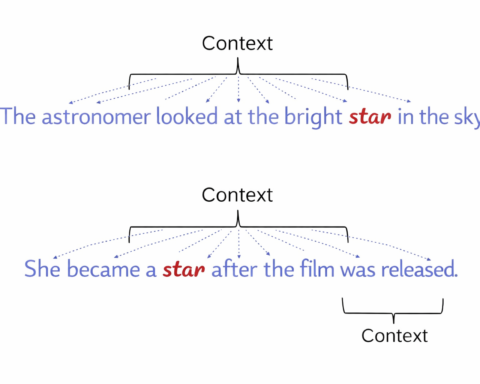

The Perceptron itself was structurally simple. It was designed as the most basic form of an artificial neural network, inspired loosely by biological neurons. Inputs were received, each assigned a weight to indicate importance. These weighted inputs were summed, and the result was passed through an activation function. If the total exceeded a certain threshold, the system produced an output of one. If it did not, the output remained zero.
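The forward pass described above is small enough to write out in full. What follows is a minimal sketch in Python, not Rosenblatt's implementation (the original was built in hardware); the function name `perceptron_output` and the example weights and threshold are assumptions chosen purely for illustration.

```python
def perceptron_output(inputs, weights, threshold):
    """Return 1 if the weighted sum of the inputs exceeds the threshold, else 0."""
    # Multiply each input by its weight and add the results together.
    total = sum(x * w for x, w in zip(inputs, weights))
    # Step activation: fire only when the threshold is crossed.
    return 1 if total > threshold else 0


# Illustrative values only: the first input carries more weight than the second.
print(perceptron_output([1, 0], [0.6, 0.2], threshold=0.5))  # weighted sum 0.6, above 0.5
print(perceptron_output([0, 1], [0.6, 0.2], threshold=0.5))  # weighted sum 0.2, below 0.5
```

With these weights the first call yields one and the second yields zero, because only the first input is important enough to cross the threshold on its own.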

This binary behaviour allowed the Perceptron to perform classification. It could distinguish between categories, provided those categories could be separated in a particular way. It was used, in early demonstrations, to recognise simple shapes, to differentiate between basic patterns, and to perform elementary forms of decision making. In principle, any task that can be framed as a two-way choice falls within its reach, whether that is identifying a shape in an image, separating spam from non-spam messages, or recognising a simple spoken input, though only in highly constrained forms.

What made the system notable was not its performance, but its method. Each input was multiplied by a weight. The results were summed. The output was determined by whether the threshold was crossed. When the system made an error, the weights were adjusted. This adjustment process, later formalised as the Perceptron Learning Algorithm, allowed the model to be trained using labelled data. It did not simply execute instructions. It changed.
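The adjustment step can be sketched in a few lines. The version below assumes the common textbook form of the rule, in which each weight moves by the error multiplied by its input, and in which the threshold is folded into a `bias` term (the negative of the threshold). The names `predict` and `train`, the learning rate, and the choice of the logical AND function as training data are all illustrative assumptions, not details from Rosenblatt's work.

```python
def predict(inputs, weights, bias):
    """Step activation on the weighted sum; bias plays the role of -threshold."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


def train(samples, learning_rate=1.0, max_epochs=100):
    """Adjust weights only when a labelled example is misclassified."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(max_epochs):
        errors = 0
        for inputs, target in samples:
            error = target - predict(inputs, weights, bias)  # -1, 0, or +1
            if error != 0:
                errors += 1
                # Nudge each weight in the direction that reduces the error.
                weights = [w + learning_rate * error * x
                           for w, x in zip(weights, inputs)]
                bias += learning_rate * error
        if errors == 0:  # a full pass with no mistakes: training is done
            break
    return weights, bias


# Labelled data for logical AND, which is linearly separable.
AND_SAMPLES = [([0, 0], 0), ([0, 1], 0), ([1, 0], 0), ([1, 1], 1)]
weights, bias = train(AND_SAMPLES)
print([predict(inputs, weights, bias) for inputs, _ in AND_SAMPLES])  # [0, 0, 0, 1]
```

Nothing in the loop encodes what AND means. The weights begin at zero and settle into a working configuration only because mistakes push them there.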

This was enough to attract attention. The Perceptron was presented as a machine that could learn. It introduced the idea that artificial systems could approximate aspects of human cognition through adaptation. It also attracted funding. The United States Navy supported the work, anticipating applications in image recognition and automated decision systems. It was one of the earliest examples of significant government investment in artificial intelligence.

At the time, the implications appeared substantial. The Perceptron demonstrated that machines could adjust their behaviour based on input data. It suggested that learning could be mechanised. It introduced the concept of artificial neurons, which would later form the basis of neural networks. For a brief period, it seemed to offer a path toward more general intelligence.

Then the limitations became clear.

In 1969, Marvin Minsky and Seymour Papert published a detailed critique in their book Perceptrons. Their analysis did not merely point out minor weaknesses. It identified structural constraints. The Perceptron could only solve problems that were linearly separable. It could draw a boundary, but only a straight one. Problems that required more complex separation, such as the XOR function, could not be solved within its architecture.
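The XOR problem can be made tangible with a small experiment. The sketch below searches a coarse grid of weights and thresholds for a single threshold unit that reproduces a given truth table. The grid, the helper names `classifies` and `count_solutions`, and the comparison with AND are assumptions made for illustration; a search of this kind is suggestive rather than a proof.

```python
from itertools import product


def classifies(samples, w1, w2, threshold):
    """True if a single threshold unit with these settings matches every label."""
    return all(
        (1 if x1 * w1 + x2 * w2 > threshold else 0) == target
        for (x1, x2), target in samples
    )


def count_solutions(samples, grid):
    """Count the (w1, w2, threshold) combinations on the grid that fit the data."""
    return sum(classifies(samples, w1, w2, t) for w1, w2, t in product(grid, repeat=3))


AND = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
XOR = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
GRID = [i / 4 for i in range(-8, 9)]  # -2.0 to 2.0 in steps of 0.25

print(count_solutions(AND, GRID) > 0)  # True: many settings reproduce AND
print(count_solutions(XOR, GRID))      # 0: no setting reproduces XOR
```

The empty result for XOR is not an accident of the grid. For the unit to output zero on (0, 0), the threshold must be at least zero. For it to output one on (1, 0) and (0, 1), each weight alone must exceed the threshold, so their sum exceeds twice the threshold, which is at least the threshold itself. But to output zero on (1, 1), that same sum must not exceed the threshold. No choice of weights satisfies both conditions.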

The model was also limited to a single layer. Without additional layers, it lacked the capacity to represent more complex relationships. These were not issues of scale or refinement. They were fundamental.

The effect was immediate and lasting. Confidence in neural networks declined. Funding was reduced. Research shifted toward rule-based systems and symbolic approaches. This period would later be recognised as part of the first AI winter, extending through the 1970s and into the early 1980s. The Perceptron, which had briefly suggested a new direction, became associated instead with a line of inquiry that had failed to deliver.

Yet the underlying idea did not disappear.

In the decades that followed, researchers returned to the concept with modifications. The introduction of hidden layers led to the development of multi-layer perceptrons. These structures allowed systems to model more complex relationships and overcome the limitations identified earlier. Advances in computing power, data availability, and training methods gradually transformed what had once been a simple model into something far more capable.
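A single hidden layer is enough to get past XOR. The sketch below wires two threshold units into a hidden layer and feeds them to a third. The weights are set by hand purely to show that such a configuration exists; they are an illustrative assumption, not the product of training, and practical multi-layer networks typically learn their weights through backpropagation with smoother activation functions.

```python
def step(total):
    """Threshold activation: 1 if the total is positive, else 0."""
    return 1 if total > 0 else 0


def xor_network(x1, x2):
    """Two hidden threshold units feeding one output unit (hand-set weights)."""
    h_or = step(x1 + x2 - 0.5)    # fires if at least one input is on
    h_and = step(x1 + x2 - 1.5)   # fires only if both inputs are on
    # Output fires when OR is on but AND is off: exactly one input is on.
    return step(h_or - h_and - 0.5)


print([xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]])  # [0, 1, 1, 0]
```

Each hidden unit still draws only a straight boundary. The output unit combines the two, and the combination is what a single layer could not express.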

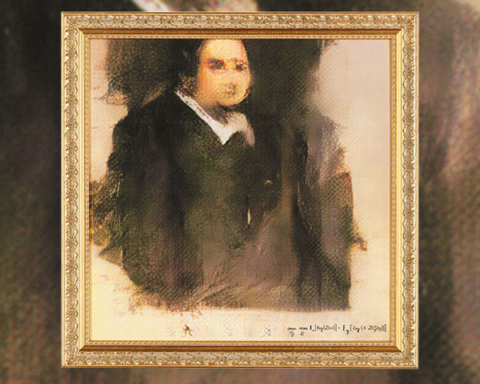

By the 2010s, deep learning had emerged as a dominant approach within artificial intelligence. Neural networks with many layers were used to process images, recognise speech, translate languages, and generate text. Systems such as ChatGPT, DALL·E, and AlphaGo operate on principles that can be traced, in simplified form, back to the original Perceptron.

The structure has become more complex. The scale has increased beyond anything Rosenblatt could have anticipated. Yet the essential mechanism remains recognisable. Inputs are weighted. Signals are combined. Outputs are produced. Parameters are adjusted through learning.

The Perceptron’s role, then, is not defined by its immediate success, but by what it introduced. It established that machines could be trained. It provided a framework for thinking about learning as a computational process. It showed that behaviour could be improved through adjustment rather than instruction.

Its limitations were real, and for a time they halted progress. But they also clarified the problem. They revealed what was missing, and in doing so, they pointed toward what would be required.

Rosenblatt’s original model now appears modest when set against modern systems. It could not solve complex problems. It could not represent deep relationships. It could not operate beyond narrow constraints. Yet without it, much of what followed would not have taken shape in the same way.

The idea that a machine might learn did not arrive fully developed. It began with a threshold, a sum, and a simple adjustment.