There was no single machine, no sudden invention, no decisive breakthrough. Instead, there was a gathering. A small group, a summer, and a proposition that seemed, at the time, both precise and quietly ambitious.

In the summer of 1956, at Dartmouth College, a group of scientists met for what would become known as the Dartmouth Summer Research Project on Artificial Intelligence. It ran for roughly six to eight weeks. It was informal, exploratory, and, in its immediate outcomes, rather modest. Yet it marked the moment when artificial intelligence became something more than scattered lines of inquiry. It became a field.

Before that summer, the work existed, but not the discipline. Research into machine learning, cybernetics, formal logic, and neural networks was already underway, but it was dispersed across mathematics, psychology, and early computer science. There was no unifying framework. No shared terminology. No central objective that gathered these efforts into a single direction.

That began to change with a proposal.

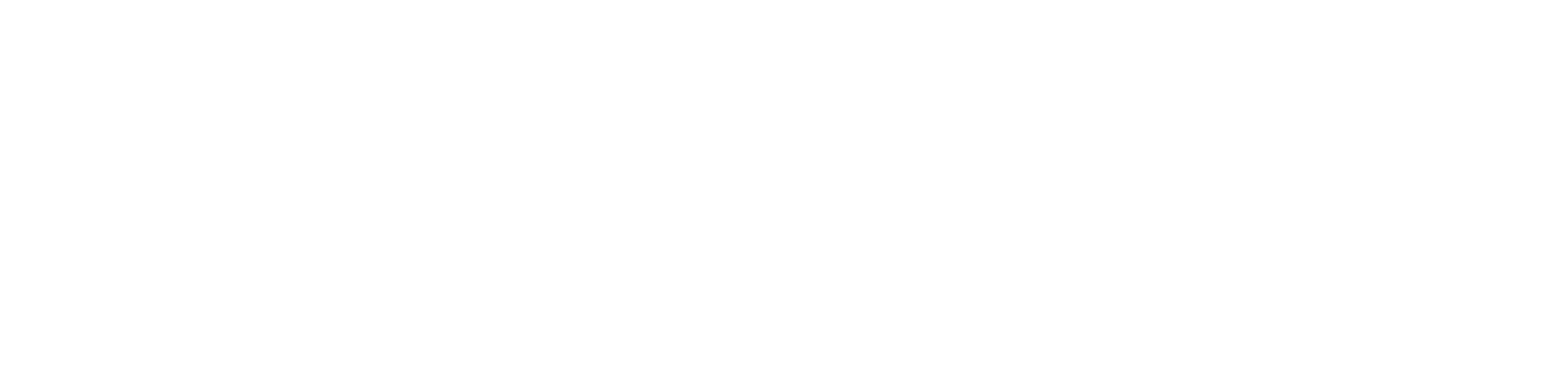

In 1955, John McCarthy, then a young assistant professor at Dartmouth, working alongside Marvin Minsky, Nathaniel Rochester, and Claude Shannon, submitted a request for funding to the Rockefeller Foundation. Within that document, McCarthy introduced a term that had not previously been formalised. Artificial intelligence.

He defined it in direct terms. A study of how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves. The wording was clear. The implication was far-reaching.

The proposal secured funding. The workshop was arranged. The expectation, stated with some confidence, was that a significant advance could be made on one or more of these problems if a carefully selected group of scientists worked on them together for a summer. That expectation would prove optimistic, but it set the tone for what followed.

The group that gathered at Dartmouth was small but consequential. McCarthy himself would go on to develop Lisp, a programming language closely associated with AI research. Minsky would become a central figure in artificial intelligence and robotics, later co-founding the MIT AI Lab. Rochester had been instrumental in the design of the IBM 701, one of the earliest computers capable of supporting such work. Shannon had already established the foundations of information theory, shaping how data and communication would be understood.

They were joined by others who would become equally influential. Herbert Simon and Allen Newell, who were already working on computational models of reasoning. Ray Solomonoff, whose work would later inform probabilistic approaches to machine learning. The group did not represent a single discipline. That was, in part, the point.

The discussions that followed were not structured in the manner of modern conferences. There were no formal proceedings in the contemporary sense. Instead, there was a sustained exchange of ideas. The central question was whether intelligence, in its various forms, could be described in such a way that a machine might reproduce it.

Several themes emerged.

There was an attempt to define the scope of artificial intelligence itself. The group proposed that intelligence could be simulated using formal logic, probability, and computational processes. The emphasis, at that stage, leaned toward symbolic reasoning. Intelligence was approached as something that could be expressed through rules and representations.

At the same time, there were early demonstrations of what such systems might look like. Newell and Simon introduced the Logic Theorist, a program designed to mimic aspects of human problem solving. It was capable of proving mathematical theorems. Not quickly, not broadly, but sufficiently to demonstrate that reasoning, in at least one domain, could be mechanised.

There were also disagreements. The relative merits of symbolic approaches and neural networks were debated. At the time, symbolic AI appeared more tractable, more aligned with existing computational methods. Neural networks, though discussed, would fall out of favour for a period before returning decades later with renewed force.

Throughout these discussions ran a consistent undercurrent of expectation. Some participants believed that machines capable of human-level reasoning might be developed within a relatively short time. The assumption was that intelligence, once properly described, could be implemented.

The conference did not produce a single defining breakthrough. There was no device, no algorithm, no immediate transformation. What it produced instead was coherence. It gave the field a name, a direction, and a set of shared assumptions.

From that point onward, artificial intelligence could be pursued as a distinct area of research.

The effects were not immediate, but they were cumulative. Universities began to establish dedicated AI programmes. Funding, both governmental and private, followed. Research into problem solving, language processing, and learning systems expanded. Early programs such as the Logic Theorist were followed by systems like the General Problem Solver, extending the attempt to formalise reasoning.

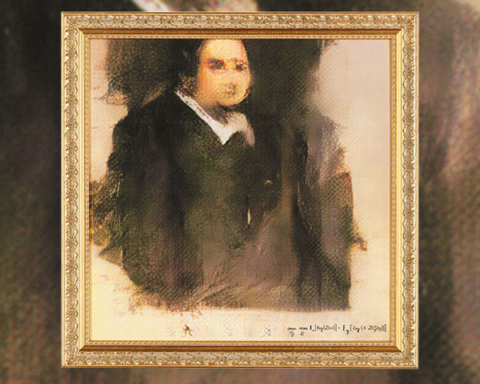

In time, this line of work led to the development of expert systems, rule-based programs designed to replicate decision making in specific domains. Later, the revival of neural networks in the 1980s and their expansion in the 2010s would introduce new methods, drawing on data rather than predefined rules. Machine learning, deep learning, and reinforcement learning would emerge as dominant approaches.

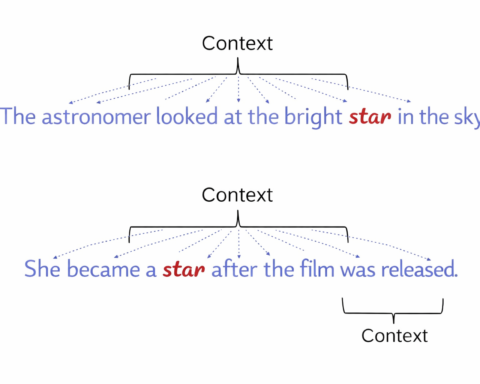

The applications broadened accordingly. Natural language processing gave rise to systems such as ChatGPT, Siri, and Alexa. Autonomous systems appeared in the form of self-driving vehicles and robotics. AI-driven automation began to reshape industries.

Yet the trajectory was not continuous.

The early optimism that followed Dartmouth gave way, in time, to periods of retrenchment. The first AI winter in the 1970s reflected the growing recognition that the problems were more difficult than anticipated. Progress slowed. Funding declined. A second period of reduced confidence followed in the late 1980s and early 1990s, when expert systems failed to deliver on their initial promise.

Each of these periods forced a reassessment. Each exposed the limits of prevailing approaches. And each, in time, contributed to the development of more robust methods.

By the early twenty-first century, advances in computing power, the availability of large datasets, and improvements in algorithm design enabled a renewed expansion. Artificial intelligence returned, not as a speculative idea, but as a set of working systems embedded in everyday technology.

The Dartmouth Conference, viewed from this distance, appears less as a breakthrough and more as a point of origin. It did not solve the problem of intelligence. It framed it. It brought together a set of questions and gave them a shared language.

The ambition expressed in that summer has not yet been fully realised. Machines can process language, recognise images, and perform complex tasks. They can write, compose, and analyse. Yet the broader objective, the replication of human-level intelligence in its entirety, remains unresolved.

What Dartmouth established was not an answer, but a direction.

And once that direction had been set, the work did not stop.