There was a point, before the systems, before the industry, before the scale, when the problem was not how to build artificial intelligence, but how to describe it.

By the mid-1950s, machines could calculate, store information, and follow instructions with increasing reliability. What they could not do, at least not in any agreed sense, was think. And yet, across several disciplines, the idea persisted that they might. Work in neuroscience, mathematics, cybernetics, and computing had begun to circle the same question from different directions. The difficulty was not a lack of activity. It was the absence of a single field.

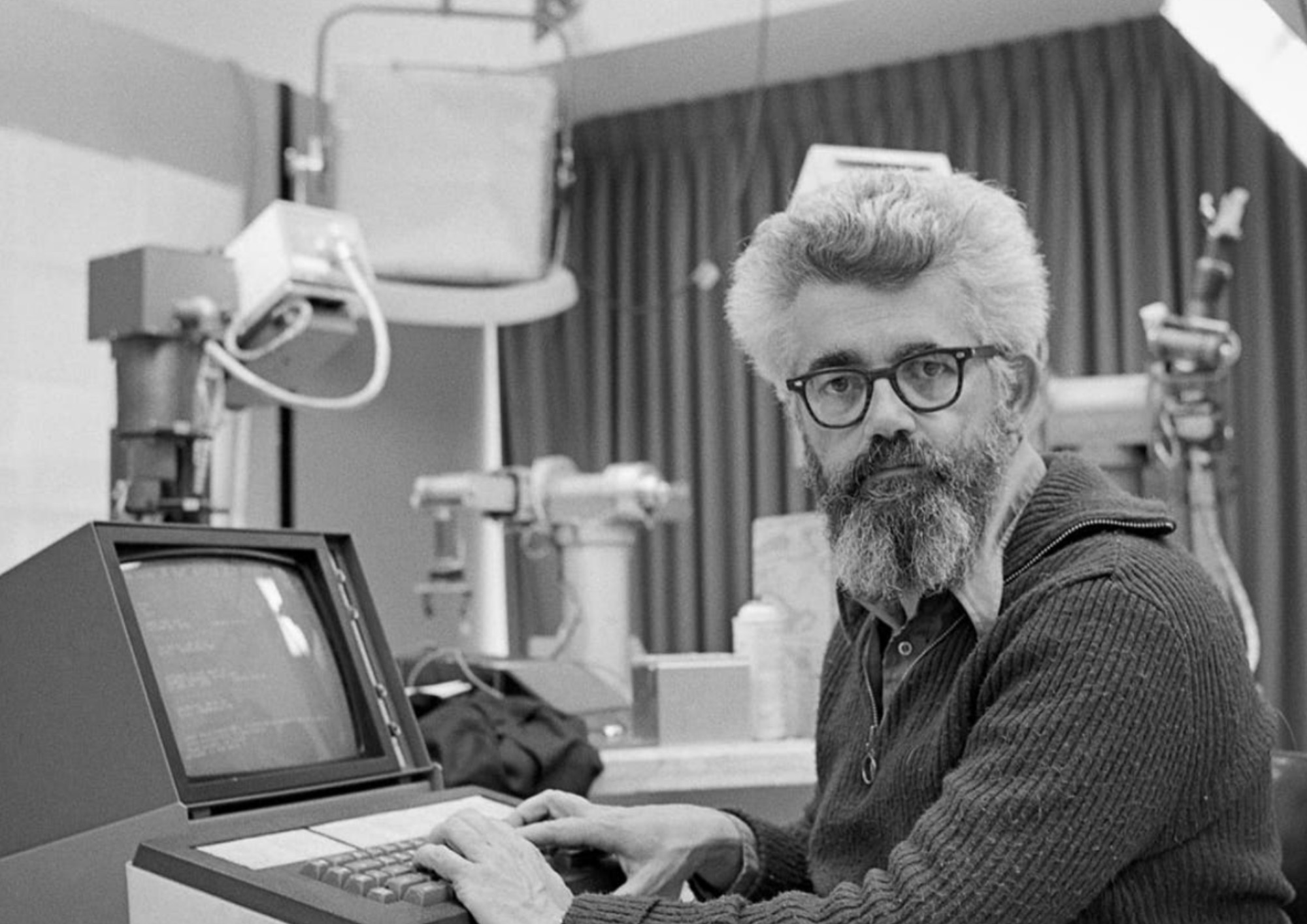

In 1955, John McCarthy proposed a solution that was at once simple and consequential. He gave the idea a name.

Working alongside Marvin Minsky, Nathaniel Rochester, and Claude Shannon, McCarthy submitted a proposal for a summer research project at Dartmouth College. Within that proposal, the term "artificial intelligence" was introduced, and the study was defined in direct terms as an attempt to find how to make machines use language, form abstractions and concepts, solve kinds of problems now reserved for humans, and improve themselves.

It was not merely a description. It was a claim that such a study was possible.

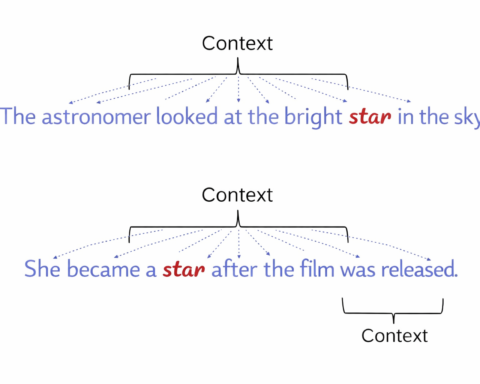

Before this point, the work had been fragmented. Some researchers referred to cybernetics, following the language of Norbert Wiener. Others spoke of automata theory or complex information processing. There was no agreement and, perhaps more importantly, no shared direction. McCarthy rejected more speculative language such as "thinking machines". His choice was deliberate. Intelligence, as he framed it, did not require human-like consciousness. It required the production of intelligent results.

The proposal secured funding from the Rockefeller Foundation. The term remained. And in the summer of 1956, the Dartmouth Summer Research Project on Artificial Intelligence convened, bringing together a small group of researchers who would, in time, define the field.

Among them were figures who would later shape the direction of artificial intelligence. Marvin Minsky would go on to co-found the MIT AI Lab. Claude Shannon had already established the principles of information theory that underpinned modern communication and computing. Herbert Simon and Allen Newell, who attended, were already building early problem-solving programs. Simon would later receive the 1978 Nobel Memorial Prize in Economic Sciences for his research on decision-making, work closely tied to his studies of problem solving in humans and machines. The group was small, but its influence was disproportionate.

The gathering itself was not structured in the manner of modern conferences. It was exploratory. Ideas were proposed, debated, and often left unresolved. There was no single breakthrough. What emerged instead was a shared assumption that intelligence could, in principle, be formalised and reproduced through computation.

From that point, artificial intelligence became a recognised area of study.

The consequences followed gradually. Universities began to establish programmes dedicated to AI. Funding, both governmental and industrial, increased. Research expanded into machine learning, robotics, and logic-based reasoning. Early systems such as the Logic Theorist and the General Problem Solver demonstrated that machines could perform tasks associated with reasoning, albeit within narrow constraints.

The act of naming had altered the trajectory.

By defining artificial intelligence as a field, McCarthy and his colleagues provided a framework that attracted researchers and resources. It shifted the idea from speculation into organised inquiry. It created a long-term objective, even if that objective was not yet clearly attainable.

The effects extended beyond academia. Over time, artificial intelligence moved from research into application. Expert systems emerged in the 1960s and became commercially significant in the 1980s, applying rule-based reasoning to practical problems. Machine learning developed further in the 1980s, introducing methods that allowed systems to adapt based on data. From the late 2000s, and especially in the 2010s, deep learning techniques enabled more complex forms of pattern recognition and decision making.

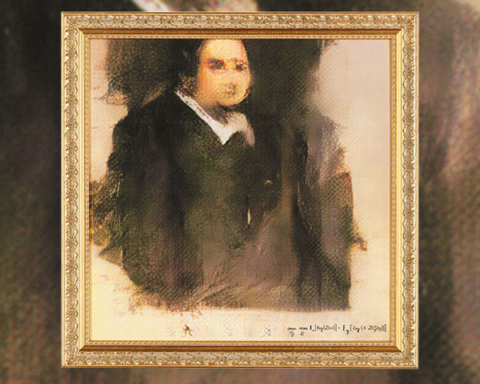

In the present, artificial intelligence operates at a scale that would have been difficult to anticipate in 1955. Search engines, including those developed by Google and Microsoft, rely on AI driven ranking and retrieval. Virtual assistants such as Siri and Alexa process natural language and respond in real time. Autonomous vehicles apply machine learning to navigation and control. Systems such as ChatGPT and DALL·E generate text and images with a degree of fluency that has moved beyond simple automation.

What began as a term has become an industry.

McCarthy’s own contributions did not end with the naming of the field. In 1958, he developed the Lisp programming language, which became closely associated with AI research. He contributed to the development of time-sharing systems, allowing multiple users to interact with a computer simultaneously. He worked on reasoning systems and early applications of artificial intelligence. He also proposed, at an early stage, that AI might play a role in space exploration, assisting astronauts and managing complex systems.

These contributions reinforced the idea that artificial intelligence was not a single problem, but a collection of related ones.

The decision to define the field in 1955 remains one of the most consequential moments in its history. It provided a focal point for research. It attracted funding and attention. It created a common language through which progress could be measured.

Yet the original ambition remains only partially fulfilled.

Artificial intelligence today can recognise images, process language, generate content, and assist in decision making across a wide range of domains. It powers self-driving cars, chatbots, medical diagnostics, and automated systems in industry. Neural networks and deep learning have extended its capabilities far beyond those of the early reasoning programs.

What it has not yet achieved, at least not in full, is the general intelligence that the original proposal implied.

The term remains. The work continues. And the definition, set down in 1955, still frames the problem.

It is, perhaps, the most enduring part of the project.