Artificial intelligence is now a dominant force in strategy games, from machines that can outplay world champions at chess to AI-driven opponents embedded in modern video games. Yet the origins of this capability lie in a far quieter moment, when the question was not how powerful such systems might become, but whether a machine could make a decision at all.

In 1951, two early programs answered that question in the affirmative.

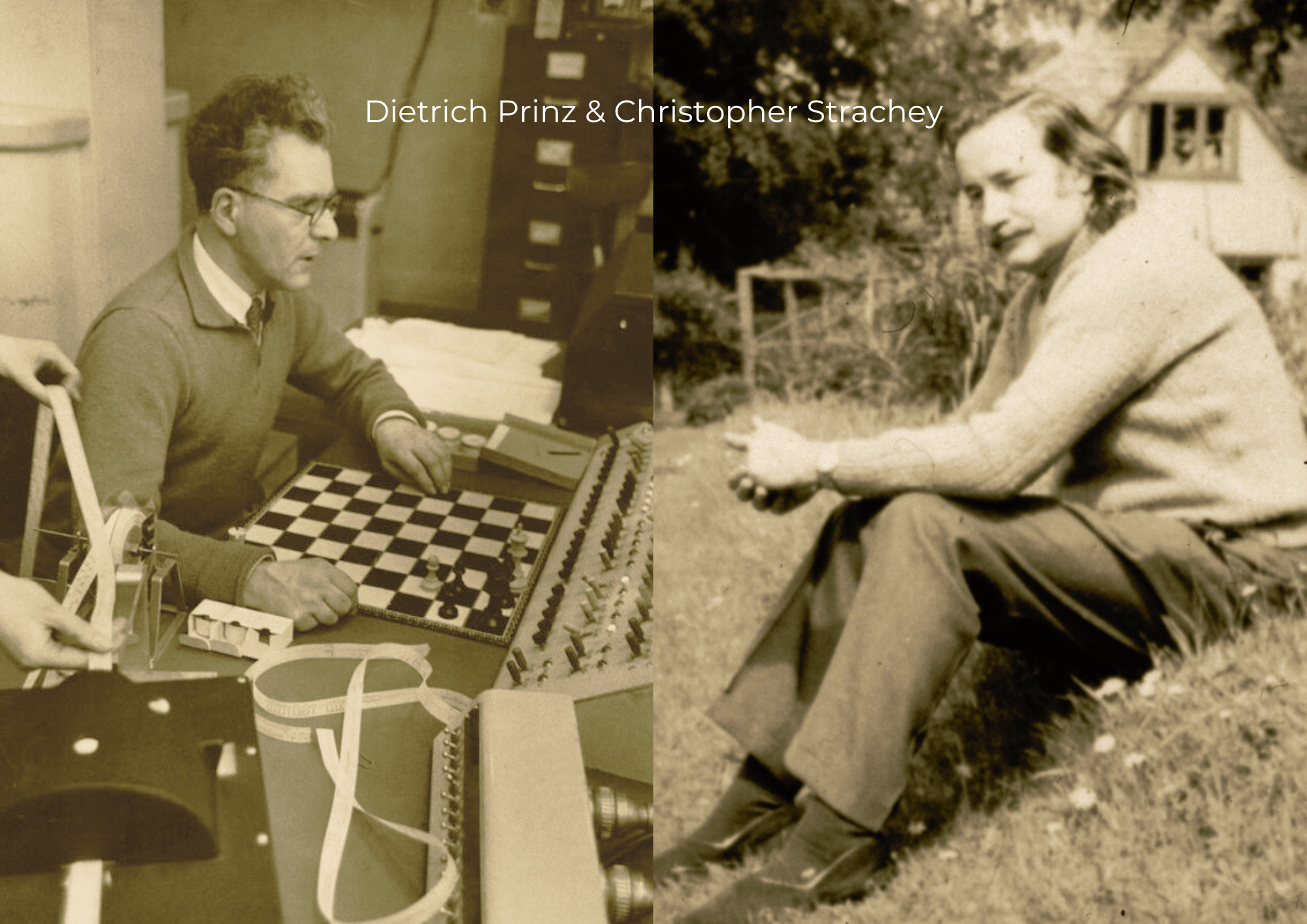

One was written by Christopher Strachey, a British pioneer who would later help shape modern programming language design. The other came from Dietrich Prinz, working in close proximity to the ideas of Alan Turing at the University of Manchester. Together, though independently, they produced the first artificial intelligence programs capable of handling strategy in games, one for draughts and one for chess.

They did not resemble modern systems. They did not learn, adapt, or generalise. But they made choices. And that was new.

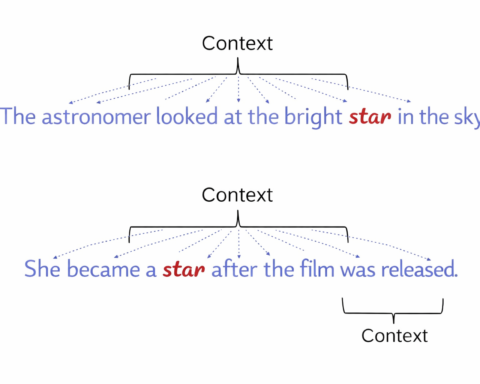

Strachey’s program, developed for the Ferranti Mark I, one of the earliest commercial computers, was designed to play draughts, the game known in North America as checkers. It operated by examining the legal moves available in a given position and selecting among them using a simple scoring method. The system did not understand the game in any human sense. Instead, it evaluated positions numerically, assigning relative value to outcomes and selecting moves that improved its standing.

What appears straightforward now was, at the time, a departure. The machine was not simply executing a fixed sequence of instructions. It was choosing between alternatives based on a defined objective.

The method relied on what would later be called heuristic search. Rather than exhaustively exploring every possible future position, the program applied rules of thumb to guide its decisions, narrowing the field of possibilities. This allowed it to operate within the severe computational limits of the period.
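The move selection described above can be sketched in a few lines of modern code. This is a minimal illustration, not a reconstruction of Strachey's program: the position encoding, the `KING_WEIGHT` value, the move names, and the `OUTCOMES` table are all hypothetical, chosen only to show a numeric score being used to pick among alternatives.

```python
from typing import Dict, Optional, Tuple

# Hypothetical toy encoding of a draughts position:
# (own men, own kings, opponent men, opponent kings)
Position = Tuple[int, int, int, int]

KING_WEIGHT = 3  # illustrative assumption, not a historical value


def evaluate(position: Position) -> int:
    """Score a position as own material minus opponent material."""
    own_men, own_kings, opp_men, opp_kings = position
    own = own_men + KING_WEIGHT * own_kings
    opp = opp_men + KING_WEIGHT * opp_kings
    return own - opp


def choose_move(outcomes: Dict[str, Position]) -> Optional[str]:
    """Return the move whose resulting position has the highest score."""
    best_move: Optional[str] = None
    best_score: Optional[int] = None
    for move, resulting_position in outcomes.items():
        score = evaluate(resulting_position)
        if best_score is None or score > best_score:
            best_move, best_score = move, score
    return best_move


# Hypothetical legal moves and the positions they would lead to.
OUTCOMES: Dict[str, Position] = {
    "advance": (3, 0, 3, 0),  # nothing changes in material
    "capture": (3, 0, 2, 0),  # opponent loses a man
    "crown": (2, 1, 3, 0),    # one own man becomes a king
}

print(choose_move(OUTCOMES))
```

The program has no notion of why a king matters. It prefers the crowning move only because the rule of thumb encoded in `evaluate` gives that outcome the largest number.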

The significance of Strachey’s work lies not in the strength of the program, which was modest, but in the demonstration it provided. A computer could evaluate a situation and select a course of action.

At roughly the same time, Prinz approached a different problem. His chess program, also running on the Ferranti Mark I, did not attempt to play full games. The constraints of the hardware made that impractical. Instead, it focused on solving specific positions, particularly checkmate puzzles.

The method was more direct. Prinz’s system used brute force search, examining sequences of moves until it found a solution that led to checkmate. Given enough time, and a sufficiently limited position, it could identify a winning line.
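The same exhaustive idea can be shown on a much smaller game than chess. The sketch below is an assumption-laden stand-in, not Prinz's method: it uses a simple counter-taking game (players alternately remove one to three counters, and whoever takes the last one wins) and a hypothetical function `winning_move` that tries every line of play up to a fixed number of the mover's own turns, much as a mate-in-n search does.

```python
from typing import Optional

TAKES = (1, 2, 3)  # counters a player may remove in one turn (toy rule)


def winning_move(pile: int, depth: int) -> Optional[int]:
    """Return a first move that forces a win within `depth` own turns.

    Returns None if no such forced win exists at that depth.
    """
    if depth <= 0:
        return None
    for take in TAKES:
        if take > pile:
            continue
        rest = pile - take
        if rest == 0:
            return take  # taking the last counter wins immediately
        # Otherwise every opponent reply must leave us a forced win.
        if all(
            rest - reply > 0
            and winning_move(rest - reply, depth - 1) is not None
            for reply in TAKES
            if reply <= rest
        ):
            return take
    return None


print(winning_move(5, 2))  # a forced win exists within two turns
print(winning_move(5, 1))  # but not within one
print(winning_move(4, 3))  # and a pile of four is lost at this depth
```

Nothing here is guided by judgement. The search simply enumerates replies until it either proves a win or runs out of depth, which is why this approach only works when the position is small enough to explore fully.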

This was not strategy in the broader sense. It was calculation. Yet it established something important. A machine could analyse a structured problem space and arrive at a correct conclusion through systematic exploration.

In both cases, the programs operated within tightly defined boundaries. The environments were controlled. The rules were fixed. The scope was limited. But within those limits, they demonstrated that decision-making could be formalised.

This had immediate implications.

First, it showed that games could serve as a testing ground for artificial intelligence. Chess and draughts offered clear rules, measurable outcomes, and well-defined objectives. They provided a way to study reasoning in a contained setting.

Second, it introduced the idea that intelligence, or at least a version of it, could be reduced to processes that machines could execute. Evaluation, selection, and planning could be expressed in computational terms.

Third, it established a line of development that would continue for decades.

Strachey’s work would influence later efforts, including the self-improving checkers program that Arthur Samuel developed at IBM during the 1950s and demonstrated on television in 1956, which introduced learning into the process. Prinz’s approach to chess would be extended through increasingly sophisticated search techniques, eventually leading to systems capable of competing at the highest levels.

In 1997, IBM’s Deep Blue defeated Garry Kasparov, marking a turning point in public perception of machine intelligence. Two decades later, systems such as AlphaZero would move beyond brute force, learning strategies through self-play and generalising across games.

The connection to those early programs is direct.

The techniques first explored in 1951 evolved into core components of artificial intelligence. Search algorithms became central to problem solving across domains. Heuristic evaluation developed into more advanced forms of optimisation. The idea of representing decisions as a sequence of evaluated alternatives now underpins systems used far beyond games.

Today, similar principles appear in areas that bear little resemblance to draughts or chess. Financial modelling, robotics, autonomous vehicles, and large-scale optimisation all rely on methods that trace their lineage back to these early experiments.

Modern AI systems, including those used by Google and others, extend these ideas with vast datasets, probabilistic models, and layered neural architectures. Yet at their core, they still perform a familiar operation. They assess possibilities and choose among them.

What Strachey and Prinz established was not that machines could play games well, but that they could take part in decision-making at all.

It was a modest beginning. The programs were limited, the machines slow, and the results narrow in scope. But the principle held.

A machine, given a defined problem and a structured method, could decide.

Everything that followed, in one form or another, builds on that fact.