Artificial intelligence and deep learning now sit at the centre of modern technological life, shaping everything from facial recognition and language translation to autonomous vehicles and medical diagnostics. Beneath these systems lies a structure that appears almost biological in its ambition: the artificial neural network.

Its origins, however, are not recent. They reach back to 1943, to a paper that reads less like engineering and more like a quiet act of intellectual provocation.

In *A Logical Calculus of the Ideas Immanent in Nervous Activity*, Warren McCulloch and Walter Pitts set out to answer a question that, even now, retains a certain unease.

Could thought itself be reduced to logic?

McCulloch, trained in neurophysiology and psychiatry, was preoccupied with how the brain processed information. Pitts, largely self-taught, brought with him a rare command of formal logic. Their collaboration was unlikely, but precise. Together, they approached the brain not as a mystery, but as a system that might be described.

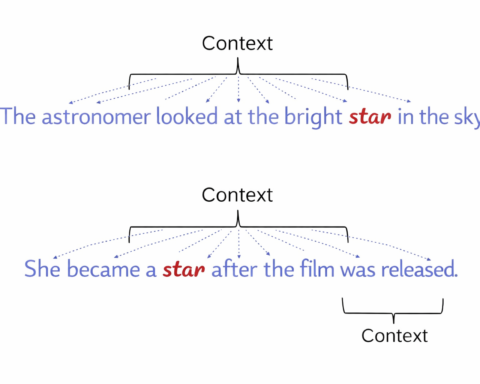

Their model was stark in its simplicity. They proposed an artificial neuron that operated as a binary unit. It was either active or inactive. One or zero. Inputs arrived from other neurons, each carrying a certain weight. These inputs were summed, and if the total exceeded a defined threshold, the neuron would fire.

Nothing more elaborate than that.

And yet, within this minimal structure, something rather important emerged.

They demonstrated that networks of such neurons could perform Boolean logic. Operations such as AND, OR, and NOT could be constructed from these simple units. A neuron could be made to activate only when two conditions were met, or when at least one was present, or to invert a signal entirely.
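The constructions described above can be sketched in a few lines of Python. This is an illustration rather than the notation of the 1943 paper: the function name `threshold_neuron`, the particular weights and thresholds, the use of a negative weight to stand in for inhibition, and the convention that a unit fires when the sum reaches its threshold are all assumptions chosen for clarity.

```python
def threshold_neuron(inputs, weights, threshold):
    """Return 1 if the weighted sum of binary inputs reaches the threshold, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights))
    return 1 if total >= threshold else 0


def AND(a, b):
    # Both inputs must be active to reach a threshold of 2.
    return threshold_neuron([a, b], [1, 1], threshold=2)


def OR(a, b):
    # A single active input is enough to reach a threshold of 1.
    return threshold_neuron([a, b], [1, 1], threshold=1)


def NOT(a):
    # A negative (inhibitory) weight keeps the unit silent when the input is active.
    return threshold_neuron([a], [-1], threshold=0)


if __name__ == "__main__":
    for a in (0, 1):
        for b in (0, 1):
            print(a, b, "AND:", AND(a, b), "OR:", OR(a, b))
    print("NOT 0:", NOT(0), "NOT 1:", NOT(1))
```

The only thing that distinguishes one gate from another is the choice of weights and threshold. The unit itself never changes.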

This was not merely a model of the brain. It was a model of computation.

For the first time, the possibility was laid out with some clarity. If neurons could be expressed as logical units, then networks of neurons could compute. And if they could compute, then the processes associated with thought might not be beyond formal description.

It is difficult, at this distance, to appreciate how disruptive that idea was.

Before 1943, the brain and the machine occupied different categories. One belonged to biology, the other to engineering. McCulloch and Pitts bridged that divide, not by speculation, but by demonstration. They showed that the two might share a common language.

The implications did not unfold immediately. The model itself had clear limitations. It did not learn. The weights assigned to inputs were fixed. There was no mechanism for adaptation, no method for adjusting behaviour based on experience. Real neurons, of course, are vastly more complex than the binary abstraction they proposed. The architecture was static, and the dynamics of learning were absent.

But the foundation had been set.

Their work directly influenced later developments, most notably the Perceptron introduced by Frank Rosenblatt in 1958. There, the crucial step was added. The ability to adjust weights in response to error. Learning, in a limited form, entered the system.
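The idea of adjusting weights in response to error can be sketched with the textbook perceptron update rule. This is a simplified illustration, not a reconstruction of Rosenblatt's original system: the names `predict` and `train_perceptron`, the learning rate, the number of epochs, and the choice of the OR function as training data are all assumptions made for the example.

```python
def predict(weights, bias, inputs):
    """Fire (return 1) when the weighted sum plus bias is positive."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=0.1):
    """Nudge weights and bias toward the target whenever a prediction is wrong."""
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)  # -1, 0, or +1
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Training data for logical OR (an assumed example task).
OR_SAMPLES = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]

weights, bias = train_perceptron(OR_SAMPLES)

if __name__ == "__main__":
    for inputs, target in OR_SAMPLES:
        print(inputs, "target:", target, "predicted:", predict(weights, bias, inputs))
```

Where the McCulloch and Pitts unit needed its weights chosen by hand, here they start at zero and are corrected only when the output disagrees with the target.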

From that point, the trajectory becomes easier to trace. Multi-layer networks emerged. Backpropagation, popularised in the 1980s, allowed networks to refine themselves across many layers. What began as a binary abstraction evolved into structures capable of modelling highly complex relationships.

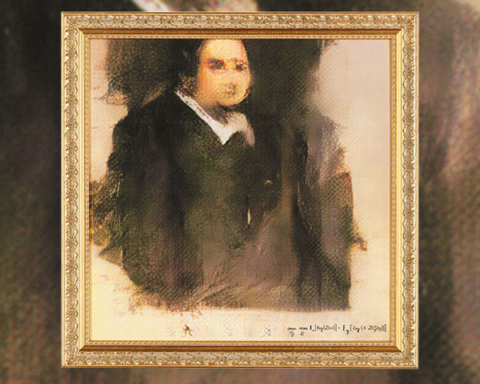

Today, these ideas underpin systems developed by organisations such as Google. Deep learning models generate language, recognise images, and make decisions in environments that would have been inconceivable to early researchers. Speech recognition, computer vision, and medical diagnostics all rely, in one way or another, on the principle first articulated in 1943.

The connection is not merely historical. It is structural.

Modern neural networks, for all their scale and complexity, still operate on the same essential premise. Units receive weighted inputs, aggregate them, and pass the result through an activation function, the modern descendant of the threshold. Layers of such units form networks. Networks produce behaviour.
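A minimal sketch of that premise follows, assuming a ReLU activation (a common modern choice, not one named in this article), which passes a positive sum forward and outputs zero otherwise. The function names `relu`, `unit`, and `layer`, and all of the weights and biases, are hypothetical values picked for illustration.

```python
def relu(value):
    """Pass positive values forward unchanged; output 0.0 otherwise."""
    return max(0.0, value)


def unit(inputs, weights, bias):
    """One unit: weight the inputs, sum them with a bias, apply the activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return relu(total)


def layer(inputs, weight_rows, biases):
    """A layer is several units reading the same inputs."""
    return [unit(inputs, w, b) for w, b in zip(weight_rows, biases)]


# Two inputs feed a hidden layer of two units, which feeds a single output unit.
hidden = layer([1.0, 2.0], [[0.5, -1.0], [1.0, 1.0]], [0.0, -1.0])
output = layer(hidden, [[1.0, 1.0]], [0.0])

if __name__ == "__main__":
    print("hidden:", hidden)
    print("output:", output)
```

Stacking calls to `layer` is all that "depth" means structurally. Production systems differ mainly in scale and in how the weights are learned.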

The elegance of the original model remains visible, even beneath the layers of refinement.

It is also worth noting what McCulloch and Pitts did not claim. They did not assert that they had captured the full nature of the brain. Nor did they suggest that their model was sufficient to produce intelligence. They proposed, instead, that a certain aspect of neural activity could be described in logical terms.

That restraint gives the work its durability.

Their contribution was not a complete theory, but a starting point. A way of thinking about thinking that could be expressed, tested, and extended.

The limitations of their model are often cited. It lacked learning. It simplified biology. It offered no account of how networks might organise themselves over time. All of this is true. And yet, without that initial simplification, the field might not have advanced at all.

There is a certain discipline in reducing a complex system to its essentials, provided one does not mistake the reduction for the whole.

McCulloch and Pitts did not make that mistake.

They asked whether thought could be represented as a system of logic. They showed that, at least in part, it could. Everything that followed, from the Perceptron to modern deep learning systems such as GPT models and image generators, builds upon that initial proposition.

What now appears as a vast and commercially significant field began, in effect, with a binary decision.

On or off.

From that, the machinery of modern artificial intelligence has been assembled.